Scalable A/B testing tools are critical for businesses looking to run high-volume experiments efficiently. They help teams transition from a few tests per quarter to hundreds annually, ensuring faster insights and better decision-making. These platforms integrate with existing data systems, reduce manual processes, and utilize advanced statistical methods like CUPED and sequential testing for accurate results. Key benefits include:

- Higher Testing Velocity: Top companies run over 500 experiments per year, uncovering rare wins faster.

- Advanced Features: Techniques like CUPED cut experiment runtimes by up to 50%, while sequential testing allows real-time monitoring without inflating false-positive rates.

- Unified Data: Warehouse-native platforms like Statsig and GrowthBook ensure data accuracy by connecting directly to systems like Snowflake or BigQuery.

- Business Impact: Experiments shift focus from surface metrics (e.g., clicks) to deeper KPIs like revenue per visitor and customer lifetime value.

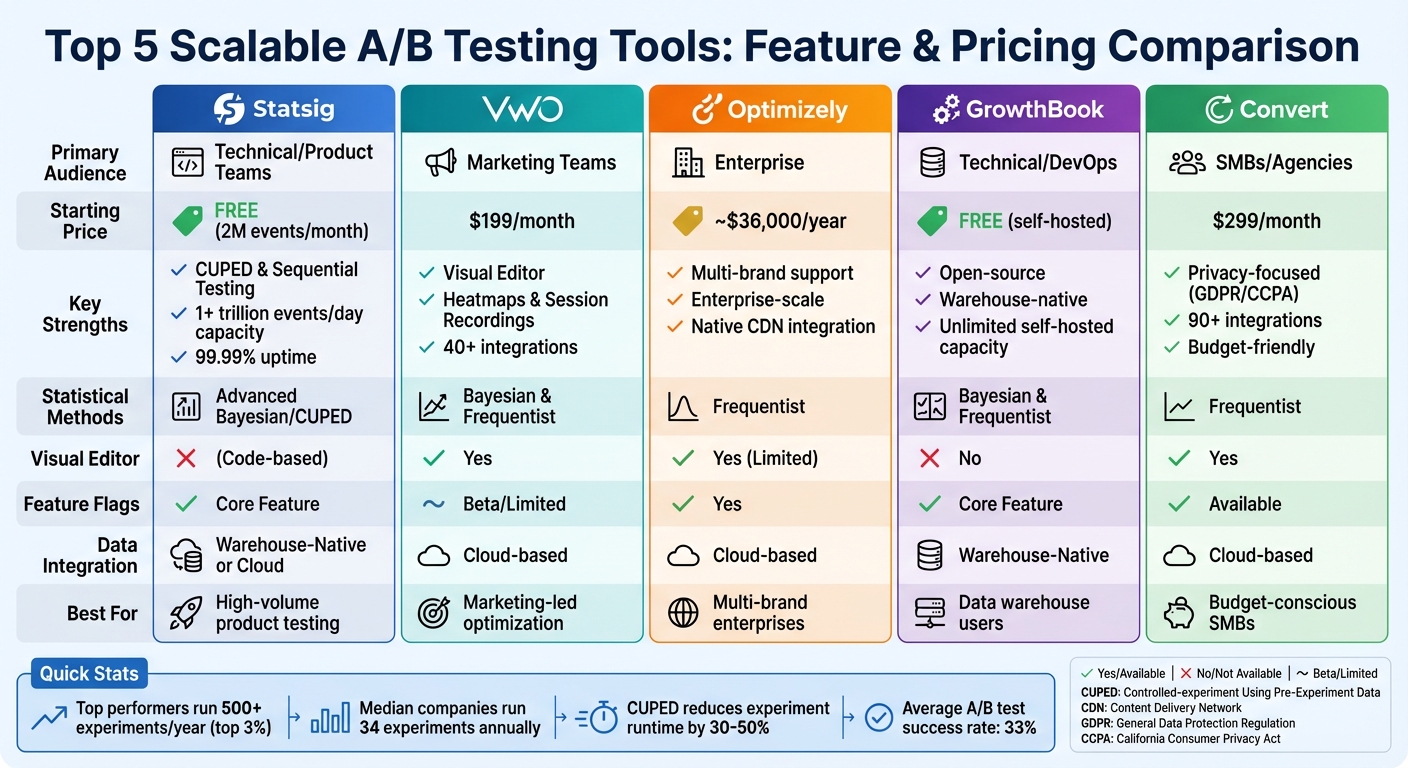

Quick Comparison of Top Tools

| Tool | Audience | Key Features | Starting Price | Ideal For |
|---|---|---|---|---|
| Statsig | Product/Engineering | CUPED, Sequential Testing | Free (2M events/month) | High-volume testing |
| VWO | Marketing Teams | Visual Editor, Heatmaps | $199/month | Marketers needing simplicity |
| Optimizely | Enterprises | Multi-brand support | ~$36,000/year | Complex enterprise needs |
| GrowthBook | Technical Teams | Open-source, Warehouse-native | Free (self-hosted) | Teams with modern data stacks |
| Convert | SMBs/Agencies | Privacy-focused | $299/month | Budget-conscious businesses |

Scalable A/B testing tools eliminate bottlenecks, improve collaboration, and directly tie experiments to revenue outcomes. Whether you’re a small business or a global enterprise, choosing the right platform depends on your team’s technical expertise, budget, and testing goals.

Core Features of Scalable A/B Testing Tools

What sets scalable A/B testing platforms apart from basic tools? Features like centralized analytics, advanced statistical techniques, and multi-platform integration are at the heart of their functionality. These capabilities don’t just improve efficiency – they can transform your testing program into a key competitive edge.

Centralized Analytics and Experiment Management

Scalable platforms offer unified dashboards that bring all your data together. For instance, warehouse-native analytics can connect directly to data warehouses like Snowflake or BigQuery, providing a single, reliable source of truth. This eliminates discrepancies in data and ensures everyone is working with the same information.

Centralized management tools also help streamline workflows. Features like automated approval routing, Kanban boards, and structured idea capture forms prevent experiments from being forgotten in email threads or Slack messages. These tools keep things organized, speeding up test execution and boosting overall productivity.

Self-service interfaces are another game-changer. They reduce the workload for data scientists by handling routine tasks. For example, Brex cut their data science workload by 50% after integrating A/B testing, feature flags, and analytics into Statsig’s unified platform. They also managed to cut costs by 20% by eliminating redundant tools.

Other advanced features, like global holdouts, allow teams to track the cumulative impact of their experiments over time.

Now, let’s dive into the statistical methods that make these platforms so effective.

Statistical Methods for Accurate Results

Accurate and efficient decision-making in A/B testing relies on advanced statistical techniques. One such method, CUPED (Controlled-experiment Using Pre-Experiment Data), incorporates historical user behavior into the analysis. This approach reduces variance, allowing teams to achieve statistical significance 30% to 50% faster, often with smaller sample sizes.
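
To make the variance-reduction idea concrete, here is a minimal sketch of the CUPED adjustment in Python. It assumes each user has one pre-experiment value and one in-experiment value of the same metric. The function name `cuped_adjust` and the sample numbers are hypothetical, and production platforms typically estimate the coefficient on pooled data from all groups rather than on a toy list like this one.

```python
from statistics import mean, variance


def cuped_adjust(metric, pre_metric):
    """Return CUPED-adjusted values: y - theta * (x - mean(x))."""
    x_bar = mean(pre_metric)
    y_bar = mean(metric)
    # theta = cov(x, y) / var(x): the coefficient that minimises the
    # variance of the adjusted metric.
    cov_xy = sum(
        (x - x_bar) * (y - y_bar) for x, y in zip(pre_metric, metric)
    ) / (len(metric) - 1)
    theta = cov_xy / variance(pre_metric)
    return [y - theta * (x - x_bar) for x, y in zip(pre_metric, metric)]


# Hypothetical data: spend per user before (pre) and during (post) the test.
pre = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]
post = [12.0, 19.0, 33.0, 41.0, 48.0, 63.0]
adjusted = cuped_adjust(post, pre)

print("variance before:", variance(post))
print("variance after: ", variance(adjusted))
```

The adjusted values keep the same mean as the raw metric but vary less whenever past behavior predicts current behavior, which is why fewer users are needed to detect the same effect.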

Sequential testing is another powerful tool. Unlike traditional methods that require fixed sample sizes and predetermined end dates, sequential testing allows for continuous monitoring. It addresses the "peeking problem", where repeatedly checking a fixed-sample test inflates the false-positive rate, by adjusting the evidence threshold so that every look at the data stays statistically valid. Teams can either conclude tests early when results are clear or extend them for more data.
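
As an illustration only, the sketch below uses one well-known sequential approach, the mixture sequential probability ratio test (mSPRT), to produce a p-value that can be checked at any time. It assumes normally distributed data with a known per-arm variance `sigma2` and a normal mixing prior with variance `tau2` on the true effect. The function names, the prior, and the peek data are all hypothetical, and this is not a description of how any specific vendor implements sequential testing.

```python
import math


def msprt_likelihood_ratio(diff, n, sigma2, tau2):
    """Mixture likelihood ratio for a difference in means after n users per arm.

    Assumes normal data with known variance sigma2 in each arm and a
    N(0, tau2) mixing prior on the true effect (null hypothesis: effect = 0).
    """
    denom = 2.0 * sigma2 + n * tau2
    return math.sqrt(2.0 * sigma2 / denom) * math.exp(
        (n ** 2) * tau2 * diff ** 2 / (4.0 * sigma2 * denom)
    )


def always_valid_p_values(checkpoints, sigma2, tau2):
    """checkpoints: (users_per_arm, observed_difference) pairs in time order."""
    p = 1.0
    p_values = []
    for n, diff in checkpoints:
        ratio = msprt_likelihood_ratio(diff, n, sigma2, tau2)
        # Running minimum: the always-valid p-value never increases.
        p = min(p, 1.0 / ratio)
        p_values.append(p)
    return p_values


# Hypothetical peeks at the running difference in means.
peeks = [(100, 0.05), (500, 0.12), (2000, 0.11)]
p_values = always_valid_p_values(peeks, sigma2=1.0, tau2=0.01)
for (n, _), p in zip(peeks, p_values):
    print(f"n={n}: p={p:.4f}")
```

A team could stop the test the first time this p-value drops below its chosen significance level, without fixing the sample size in advance.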

Bayesian models offer yet another approach, providing direct probability statements that many find easier to interpret. This clarity often results in faster decision-making.
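
A "direct probability statement" usually looks like "there is a 97% chance that B beats A". The sketch below estimates that number for a conversion metric using Beta posteriors and Monte Carlo sampling. It assumes a uniform Beta(1, 1) prior, and the function name `prob_b_beats_a` and the traffic numbers are hypothetical.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20000, seed=0):
    """Estimate P(rate_B > rate_A) from conversion counts."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        # Posterior under a uniform prior: Beta(1 + successes, 1 + failures).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


# Hypothetical test: 100/1,000 conversions for A, 150/1,000 for B.
p = prob_b_beats_a(100, 1000, 150, 1000)
print(f"P(B beats A) is about {p:.3f}")
```

Stakeholders can read the result directly as the chance that the variation is better, given the prior and the data collected so far.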

For more complex scenarios, multivariate and multi-variation (A/B/n) tests let teams compare several variations simultaneously. Research shows that tests with four or more variations are 2.4 times more likely to succeed and deliver 27.4% higher improvements compared to standard A/B tests. However, because traffic is split across more groups, these tests require larger sample sizes and advanced statistical engines to ensure accuracy.

Multi-Platform and Real-Time Testing

Scalable A/B testing tools go beyond analytics and statistical methods – they ensure seamless testing across all user touchpoints. Today’s customers interact with brands through websites, mobile apps, emails, and social media. Omnichannel testing ensures consistency across these platforms, which can lead to personalized experiences delivering 41% greater impact.

Modern platforms support this with native SDKs for iOS and Android, alongside web testing capabilities, allowing teams to run coordinated experiments across multiple channels.
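
One common way to keep a user in the same variation on every channel is deterministic hashing of a stable identifier. The sketch below shows the general idea. It is not the hashing scheme of any particular SDK, and the function name, key format, and experiment key are assumptions made for illustration.

```python
import hashlib


def assign_variation(unit_id, experiment_key, variations=("control", "treatment")):
    """Map a stable ID to a variation; same inputs always give the same answer."""
    # Including the experiment key keeps assignments independent across tests.
    digest = hashlib.sha256(f"{experiment_key}:{unit_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % len(variations)
    return variations[bucket]


# The web app and the mobile app compute the same assignment independently.
print(assign_variation("user-42", "checkout-button-color"))
print(assign_variation("user-42", "checkout-button-color"))
```

Because no shared state is required, any client or server that knows the user ID and the experiment key arrives at the same variation.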

Real-time capabilities are critical, too. Features like real-time monitoring and feature flags allow teams to respond quickly – whether rolling back a change when metrics decline or deploying updates instantly.

Edge computing is another essential feature. By running experiments at the edge of content delivery networks, platforms eliminate the "flicker" effect caused by client-side script changes. This ensures high performance, even for users spread across different geographies.

Multi-armed bandit algorithms offer a dynamic approach to traffic allocation during tests. Instead of waiting for the test to conclude, these algorithms shift traffic toward winning variations in real time, maximizing conversion gains. However, careful implementation is key to avoid jumping to premature conclusions.
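
Thompson sampling is one widely used bandit strategy, and a small simulation shows how traffic drifts toward the stronger variation. Everything here is a sketch under stated assumptions: a binary conversion metric, uniform Beta(1, 1) priors, and made-up true conversion rates that a real test would not know.

```python
import random


def thompson_pick(stats, rng):
    """stats: arm -> (conversions, visitors). Returns the arm to show next."""
    best_arm, best_draw = None, -1.0
    for arm, (conversions, visitors) in stats.items():
        # Sample a plausible conversion rate from each arm's posterior.
        draw = rng.betavariate(1 + conversions, 1 + visitors - conversions)
        if draw > best_draw:
            best_arm, best_draw = arm, draw
    return best_arm


def simulate(true_rates, rounds=5000, seed=1):
    """Serve `rounds` visitors, updating each arm's counts as results arrive."""
    rng = random.Random(seed)
    stats = {arm: (0, 0) for arm in true_rates}
    for _ in range(rounds):
        arm = thompson_pick(stats, rng)
        conversions, visitors = stats[arm]
        converted = rng.random() < true_rates[arm]
        stats[arm] = (conversions + int(converted), visitors + 1)
    return stats


# Hypothetical true conversion rates, used only to drive the simulation.
result = simulate({"A": 0.05, "B": 0.20})
for arm, (conversions, visitors) in result.items():
    print(arm, "visitors:", visitors, "conversions:", conversions)
```

The weaker arm still receives some traffic early on, which is the exploration that guards against locking in a premature winner.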

Finally, guardrail metrics act as a safety net. They monitor critical indicators – like error rates, latency, and user experience metrics – to ensure that gains in one area don’t come at the expense of others. Real-time tracking helps teams identify and address issues before they affect large numbers of users.

Top Scalable A/B Testing Tools Compared

Let’s dive into a detailed comparison of some leading A/B testing tools. Picking the right one can feel like a juggling act – balancing cost, usability, and performance. Whether you’re a marketer, developer, or enterprise team, each tool has its own strengths tailored to specific needs. Understanding how these tools align with your team’s expertise and infrastructure is key to building a reliable testing program.

Tool Overviews and Use Cases

Statsig is a favorite among technical teams looking for enterprise-level features without the hefty price tag. This platform handles over 1 trillion events daily with an impressive 99.99% uptime. Designed for product and engineering teams, it includes advanced features like CUPED and sequential testing. Notion, for instance, transitioned from an in-house tool to Statsig, dramatically increasing their experiment volume. Pricing is usage-based, offering a free tier for up to 2 million events per month, with additional events costing about $0.0004 each.

VWO is built for marketers who need a visual editor to run tests without relying on developers. It also includes qualitative tools like heatmaps and session recordings, providing insights into why certain variations perform better. VWO offers a free plan for up to 50,000 visitors per month, with paid plans starting at $199/month. It integrates seamlessly with over 40 tools, including HubSpot, Salesforce, and Google Analytics 4. This makes it an excellent choice for marketing-driven teams.

Optimizely is the go-to option for enterprises managing complex needs across multiple brands. For example, Cox Automotive improved its experimentation program’s health score by 27% in one quarter after adopting Optimizely to manage tests across brands like Kelley Blue Book. It connects natively with platforms like Adobe Analytics, Google Analytics 4, and CDNs such as Cloudflare and Akamai. However, with pricing starting around $36,000 and going beyond $50,000 annually, it’s often out of reach for smaller businesses.

GrowthBook offers an open-source, warehouse-native solution. Its core platform is free for self-hosted deployments, while managed services cost $20 per user per month. This makes it appealing for technical teams with modern data stacks like Snowflake or BigQuery. Its warehouse-native model ensures data accuracy and sovereignty, eliminating discrepancies between testing platforms and analytics tools.

Convert Experiences is designed for agencies and small to medium-sized businesses. Starting at $299/month (billed annually) for up to 100,000 tested users, it integrates with over 90 tools and prioritizes privacy with GDPR and CCPA compliance. However, it lacks advanced statistical methods like variance reduction, which are available in platforms like Statsig.

Each tool has its strengths, and the best choice depends on your team’s technical skills and infrastructure. The table below offers a side-by-side comparison to help you decide.

Feature Comparison Table

| Feature | Statsig | VWO | Optimizely | GrowthBook | Convert |
|---|---|---|---|---|---|
| Primary Audience | Technical/Product Teams | Marketing Teams | Enterprise | Technical/DevOps | SMBs/Agencies |
| Visual Editor | No (Code-based) | Yes | Yes (Limited) | No | Yes |
| Statistical Methods | Advanced Bayesian/CUPED | Bayesian & Frequentist | Frequentist | Bayesian & Frequentist | Frequentist |
| Data Integration | Warehouse-Native or Cloud | Cloud-based | Cloud-based | Warehouse-Native | Cloud-based |
| Feature Flags | Core Feature | Beta/Limited | Yes | Core Feature | Available |
| Traffic Capacity | 1+ trillion events/day | Up to 50K visitors (free tier) | Enterprise-scale | Unlimited (self-hosted) | Up to 100K tested users |
| Starting Price | Free (2M events/month) | Free (50K visitors) | ~$36,000/year | Free (self-hosted) | $299/month |
| Best For | High-volume product testing | Marketing-led optimization | Multi-brand enterprises | Data warehouse users | Budget-conscious SMBs |

If your team has strong engineering resources and uses modern data stacks, Statsig or GrowthBook are great options. For marketing teams needing a user-friendly interface, VWO or Convert might be the better fit. Meanwhile, enterprises with complex infrastructures may find Optimizely worth the premium, though many teams discover that Statsig delivers similar capabilities at a fraction of the cost.

How to Implement Scalable A/B Testing

Planning and Designing Experiments

To set up effective A/B tests, start with the PICOT framework. This one-page plan outlines the key elements of your experiment: Population, Intervention, Control, Outcome, and Time horizon. It helps eliminate confusion before launching your test. When crafting your hypothesis, make sure it’s clear, measurable, and testable. For example, instead of saying, "users will like the new button", go for something like, "changing the checkout button from blue to green will increase conversions among mobile users over two weeks".

Next, choose the right randomization unit for your test. For logged-in users, User IDs work best since they track behavior across devices. For anonymous traffic, Device IDs are more suitable, though they might count the same user multiple times if they switch devices. For one-time processes like guest checkouts, Session IDs are ideal. Before starting, decide on your Minimum Detectable Effect (MDE), the smallest change worth detecting, and calculate the sample size needed to detect it reliably.
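
For a conversion-rate metric, the link between MDE and sample size can be sketched with the standard two-proportion approximation. The defaults below (5% two-sided significance, 80% power) are common conventions rather than requirements, and the function name and the example baseline are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate, relative_mde, alpha=0.05, power=0.8):
    """Approximate users needed per group to detect a relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance_sum / (p2 - p1) ** 2)


# Hypothetical plan: 5% baseline conversion, 10% relative lift (5.0% to 5.5%).
n = sample_size_per_group(0.05, 0.10)
print("users needed per group:", n)
```

With these inputs the answer is roughly 31,000 users per group. Halving the MDE roughly quadruples the requirement, which is why small expected effects need long-running tests or variance reduction.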

Don’t forget to define guardrail metrics alongside your main goal. For instance, if your focus is on boosting conversions, also keep an eye on metrics like page load speed and error rates to avoid unintended side effects. Additionally, make sure non-test variables are consistent across groups to maintain reliability.

Tracking and Analyzing Test Results

Once your experiment is set up, precise tracking is essential to turn raw data into meaningful insights. Link your tests directly to a data warehouse like Snowflake or BigQuery to monitor key business metrics such as Customer Lifetime Value and return rates. Break down the results by segments to uncover growth opportunities, and always check for Sample Ratio Mismatch (SRM) to ensure your data is valid.
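
A Sample Ratio Mismatch check is usually a chi-square goodness-of-fit test on the group sizes. The sketch below covers the two-group case using only the standard library. The function name, the counts, and the 0.001 alert threshold are assumptions, so pick a threshold that suits your program.

```python
import math


def srm_p_value(control_users, treatment_users, expected_control_share=0.5):
    """Chi-square goodness-of-fit p-value for a two-group traffic split."""
    total = control_users + treatment_users
    expected_control = total * expected_control_share
    expected_treatment = total - expected_control
    chi2 = (
        (control_users - expected_control) ** 2 / expected_control
        + (treatment_users - expected_treatment) ** 2 / expected_treatment
    )
    # With two groups there is 1 degree of freedom, where the chi-square
    # survival function equals erfc(sqrt(chi2 / 2)).
    return math.erfc(math.sqrt(chi2 / 2))


# Hypothetical counts from a test that was configured as a 50/50 split.
p = srm_p_value(5000, 5400)
if p < 0.001:
    print(f"Possible SRM (p={p:.6f}): investigate before trusting results")
```

A very small p-value suggests that assignment or logging is broken, so the metric comparison should not be trusted until the cause is found.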

Avoid ending tests too early, even if the early results look promising. With the average success rate of A/B tests hovering around 33%, drawing conclusions too soon can lead to false positives. Document every outcome in a centralized knowledge base. This not only prevents redundant testing but also builds a solid foundation for long-term strategies.

"A/B testing is not only humbling, it can dramatically improve decision making… shipping a product that won is a win, but so is not shipping a product that lost."

– GrowthBook Docs

Working with Growth-onomics for A/B Testing

Growth-onomics takes A/B testing to the next level by weaving it into broader marketing strategies. Through its Data Analytics services, it connects warehouse-native analytics with customer journey mapping. This allows experiment data on retention and lifetime value to directly inform models for user acquisition and experience improvements. In this way, A/B testing becomes more than just a standalone activity – it actively supports performance marketing, SEO, and conversion rate optimization efforts across all customer touchpoints. Teams conducting over 500 experiments annually rank among the top 3% of high performers, highlighting the power of combining rigorous testing with data-driven marketing strategies.

Choosing the Right Scalable A/B Testing Tool

Main Takeaways for Small Businesses

Before diving into A/B testing tools, it’s important to assess your team’s experience with experimentation, whether you need server-side testing, and your budget constraints. For small businesses, affordable options like Zoho PageSense (starting at $16/month) or Qualaroo (starting at $19.99/month) are great starting points. On the other hand, larger enterprises should prepare for integration and setup costs that can be two to three times the platform fee during the first year.

When evaluating tools, focus on their statistical capabilities. Features like CUPED (which reduces variance and can shorten experiment durations by 30% to 50%) and sequential testing (allowing for early decisions) are game-changers. Additionally, tools with outlier detection ensure more accurate results. If your team works with platforms like Snowflake or BigQuery, prioritize a warehouse-native solution that integrates seamlessly.

Scalability is another key factor. The tool you choose should work for both non-technical marketers – via user-friendly visual editors – and engineers, through features like SDKs and feature flags. This balance allows teams to run independent experiments without sacrificing statistical accuracy. Most experiments target just a handful of metrics – like CTA clicks, revenue, checkout completion, registration, and add-to-cart actions. To give you an idea, the median company runs 34 experiments annually, while top performers execute over 200.

Privacy and compliance are non-negotiable, especially for tests involving sensitive user data or checkout processes. Look for compliance with regulations like GDPR, CCPA, and HIPAA, and for certifications like SOC 2 Type II and ISO 27001. Tools like Convert Experiences, rated 4.7/5 on G2, are well-regarded for their privacy-first approach. Also, ensure your tool provides low-latency SDKs to avoid the "flicker effect", which can distort results and harm the user experience.

By considering these factors, you’ll set a solid foundation for a scalable and effective A/B testing program.

Next Steps for Growth

With your criteria in place, it’s time to take actionable steps to enhance your A/B testing strategy.

Start by signing up for a 15- to 30-day free trial to assess how well the tool fits your needs and how responsive their support team is. Involve key team members – like developers, designers, and product managers – from the beginning to spot any workflow challenges.

Once you’ve chosen a tool, dedicate the first 30 days to integrating it with your data warehouse and connecting it to critical business metrics. For companies aiming to combine rigorous testing with broader marketing goals, services like Growth-onomics Data Analytics can help. Their approach integrates warehouse-native analytics with customer journey mapping, allowing experiment data on retention and lifetime value to directly influence strategies like user acquisition, SEO, and conversion rate optimization. This approach transforms A/B testing into a key driver of performance marketing success.

FAQs

What are the main advantages of using scalable A/B testing tools?

Scalable A/B testing tools make it possible for teams to run multiple experiments at the same time without sacrificing accuracy or statistical reliability. By automating tasks like calculating sample sizes, tracking confidence levels, and analyzing results, these tools ensure experiments provide meaningful insights – even when dealing with high traffic. This approach minimizes the chances of false positives or negatives and speeds up data-driven decision-making.

Built for large-scale operations, these tools integrate effortlessly with data warehouses and analytics platforms, offering real-time insights that tie experiment results directly to key metrics such as revenue. Advanced features like multivariate testing, personalization, and feature-flag management allow teams to test complex ideas across various audience segments. This not only sparks new ideas but also improves conversion rates. For agencies like Growth-onomics, these tools shorten the learning curve, enhance performance, and drive measurable growth for businesses in the U.S.

How do methods like CUPED and sequential testing enhance the accuracy of A/B testing?

Advanced statistical techniques like CUPED (Controlled-experiment Using Pre-Experiment Data) and sequential testing offer powerful ways to enhance A/B testing accuracy and efficiency.

CUPED leverages data collected before the experiment starts. By reducing variance in the results, it helps ensure findings are more reliable. This approach can even make it possible to detect meaningful differences between test groups with smaller sample sizes – a big win when resources are limited.

Meanwhile, sequential testing allows for real-time data analysis during the experiment. Instead of waiting until the end, you can pinpoint statistically significant results sooner. This can save both time and resources while still maintaining the integrity of your findings.

Incorporating these methods into A/B testing can help businesses make smarter, faster decisions based on solid data.

What’s the best A/B testing tool for teams with limited technical skills?

For teams with limited technical skills, Zoho PageSense stands out as a solid option. Its visual editor is easy to navigate, and the dashboard is designed to be user-friendly. Marketers can set up, execute, and evaluate A/B tests without touching a single line of code. Thanks to its simplicity and budget-friendly pricing, it’s a popular pick for smaller teams or those just starting with A/B testing.

Another no-code alternative is VWO, which offers a hassle-free testing process along with built-in behavioral insights. That said, many users find its interface slightly less intuitive compared to Zoho PageSense.

For teams in the U.S. that prefer a drag-and-drop workflow, Zoho PageSense remains a top choice.