Secure real-time data integration is crucial for managing sensitive information like PII and PHI while meeting compliance standards such as SOC 2, HIPAA, GDPR, and CCPA. This article highlights seven leading tools – Talend, Informatica, Fivetran, Confluent Cloud, Snowflake, Cleo Integration Cloud, and Integrate.io – that combine real-time data processing with robust security measures.

Key Takeaways:

- Real-Time Performance: Tools like Fivetran and Confluent Cloud excel in handling high-volume, low-latency data streams.

- Security Features: Encryption (AES-256, TLS 1.2+), role-based access control, private networking (AWS PrivateLink, Azure Private Link), and column-level security are standard.

- Compliance: SOC 2 attestations and support for HIPAA and GDPR obligations help these tools meet strict regulatory requirements.

- Governance: Features like data lineage, audit logs, and metadata tracking enhance transparency and control.

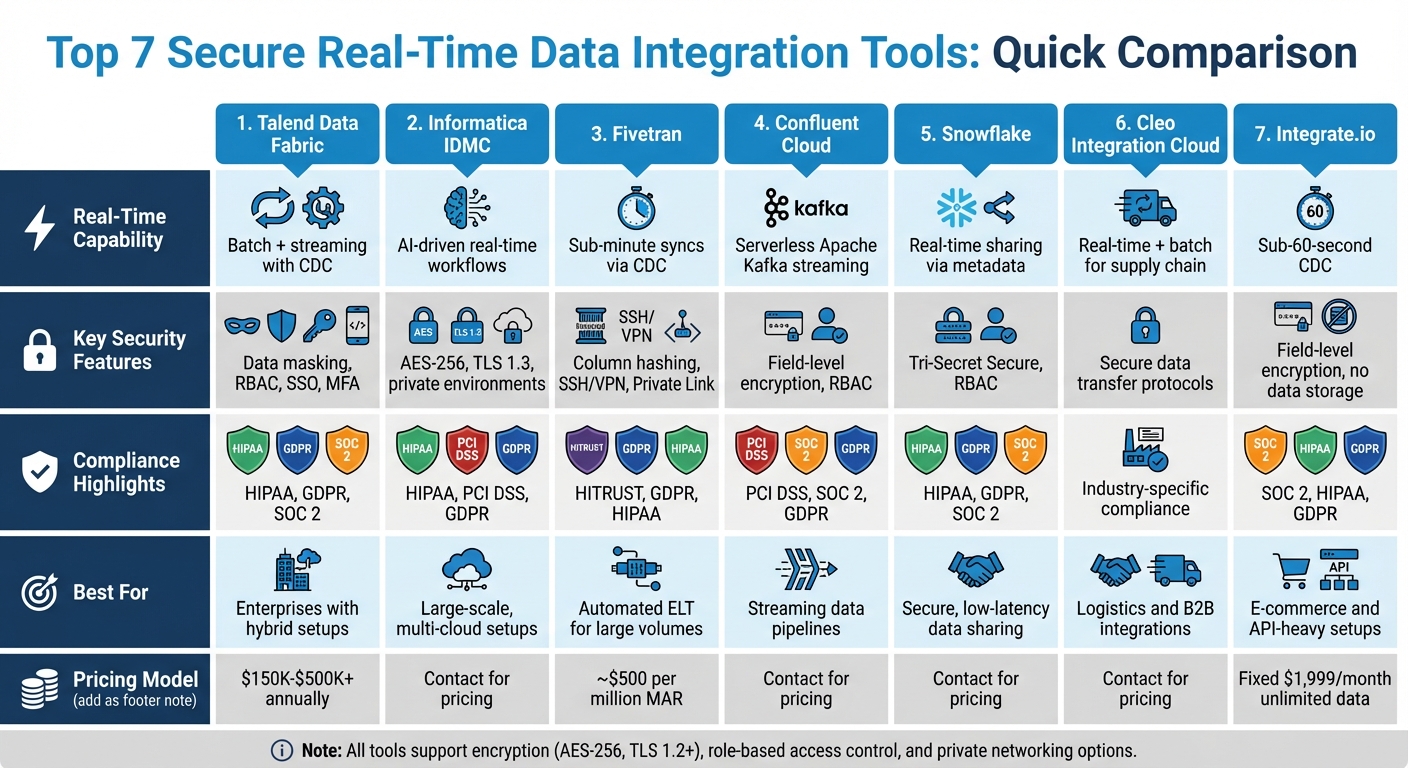

Quick Comparison:

| Tool | Real-Time Capability | Key Security Features | Compliance Highlights | Best For |
|---|---|---|---|---|
| Talend | Batch + streaming with CDC | Data masking, RBAC, SSO, MFA | HIPAA, GDPR, SOC 2 | Enterprises with hybrid setups |
| Informatica | AI-driven real-time workflows | AES-256, TLS 1.3, private environments | HIPAA, PCI DSS, GDPR | Large-scale, multi-cloud setups |
| Fivetran | Sub-minute syncs via CDC | Column hashing, SSH/VPN, Private Link | HITRUST, GDPR, HIPAA | Automated ELT for large volumes |
| Confluent Cloud | Serverless Apache Kafka streaming | Field-level encryption, RBAC | PCI DSS, SOC 2, GDPR | Streaming data pipelines |
| Snowflake | Real-time sharing via metadata | Tri-Secret Secure, RBAC | HIPAA, GDPR, SOC 2 | Secure, low-latency data sharing |
| Cleo | Real-time + batch for supply chain | Secure data transfer protocols | Industry-specific compliance | Logistics and B2B integrations |
| Integrate.io | Sub-60-second CDC | Field-level encryption, no data storage | SOC 2, HIPAA, GDPR | E-commerce and API-heavy setups |

These tools help businesses securely integrate and analyze data for tasks like demand forecasting, fraud detection, and real-time personalization. The right choice depends on your requirements for speed, compliance, and governance.

Secure Real-Time Data Integration Tools Comparison: Features, Compliance & Best Use Cases


How to Evaluate Secure Real-Time Integration Tools

When choosing secure integration tools, focus on four key areas: real-time performance, security controls, compliance, and governance. Each of these addresses specific risks and operational demands associated with managing sensitive data.

Real-time capabilities are crucial for ensuring smooth operations. Look for tools that can handle low latency, event streaming, and high-volume data throughput without breaking a sweat. Platforms that automatically manage schema drift are a big plus, as they help avoid disruptions in your data pipelines.

Security controls are the backbone of any integration tool. At a minimum, tools should feature end-to-end encryption – TLS 1.2+ for data in transit and AES-256 for data at rest. It’s even better if the platform supports customer-managed keys and generates ephemeral keys for added security. For an extra layer of protection, choose tools that offer column-level security, such as hashing or blocking sensitive data fields before they even reach your data warehouse.
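To make column-level security concrete, here is a minimal Python sketch of blocking and hashing fields before a record is loaded. It is an illustration only, not any vendor's implementation: the column names, the `protect_record` helper, and the placeholder key are all assumptions made for the example.

```python
import hashlib
import hmac

# Hypothetical policy: which columns to drop and which to hash before loading.
BLOCKED_COLUMNS = {"ssn"}
HASHED_COLUMNS = {"email"}


def protect_record(record: dict, secret_key: bytes) -> dict:
    """Return a copy of the record with blocked columns removed and hashed columns replaced."""
    protected = {}
    for column, value in record.items():
        if column in BLOCKED_COLUMNS:
            continue  # blocked fields are dropped and never reach the warehouse
        if column in HASHED_COLUMNS:
            # Keyed SHA-256 (HMAC): equal inputs give equal digests,
            # so the column can still be used as a join key.
            digest = hmac.new(secret_key, str(value).encode("utf-8"), hashlib.sha256)
            protected[column] = digest.hexdigest()
        else:
            protected[column] = value
    return protected


key = b"example-key-rotate-me"  # placeholder; in practice this would come from a secrets manager
row = {"customer_id": 42, "email": "ana@example.com", "ssn": "000-00-0000", "region": "EU"}
safe_row = protect_record(row, key)
print(safe_row)
```

A keyed hash is used here rather than a bare SHA-256 so that common values such as email addresses are harder to recover by guessing. Whether a given platform does the same is something to confirm in its documentation.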

Compliance support is more than just a checkbox. Don’t rely solely on vendor claims – verify that the tool undergoes annual SOC 1 and SOC 2 audits, holds certifications like ISO 27001 and PCI DSS Level 1, and will sign a HIPAA Business Associate Agreement (BAA). Data residency controls are another critical feature, allowing you to choose specific geographic regions (e.g., the EU for GDPR compliance or US GovCloud for federal requirements) to meet local data sovereignty laws.

Governance features ensure you maintain visibility and control over your data. Tools with robust role-based access control (RBAC) and SCIM support for automated user provisioning are ideal. Immutable audit logs that capture every request – complete with timestamps, caller identity, and outcomes – offer a clear chain of custody for audits. Metadata lineage tracking adds another layer of transparency, showing the origins of your data and how it’s been transformed during integration.
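One common way to make an audit trail tamper-evident is to chain entries together with hashes. The sketch below shows the idea in plain Python. It is a simplified illustration under assumed field names (`caller`, `action`, `outcome`), not how any of the featured tools store their logs, and real immutability would also need write-once storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


def append_entry(log: list, caller: str, action: str, outcome: str) -> dict:
    """Append an audit entry whose hash also covers the previous entry's hash."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "caller": caller,
        "action": action,
        "outcome": outcome,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    entry["hash"] = hashlib.sha256(payload).hexdigest()
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited entry or one removed from the middle breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "svc-etl", "read:orders", "allowed")
append_entry(audit_log, "analyst-7", "read:patients", "denied")
print(verify_chain(audit_log))
```

Changing any field of an earlier entry after the fact makes `verify_chain` return `False`, which is the property auditors care about when they ask for a chain of custody.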

The Quick Comparison table above summarizes how the seven featured tools measure up against these criteria, and a later comparison table looks more closely at pricing and features for three of them, helping you identify the best fit for your security and compliance needs.

Growth-onomics Data Strategy and Secure Integration Foundations

At Growth-onomics, secure real-time data integration is at the heart of their approach to demand forecasting and predictive growth modeling. Their data strategy follows a structured framework where data flows through an integration layer, ensuring it’s ready for analysis at every stage. This framework reflects a commitment to embedding security measures throughout the entire process.

The agency employs a hybrid model that separates orchestration from data handling. This means the data plane remains within the client’s secure network – whether housed in a VPC (Virtual Private Cloud) or an on-premises setup. By keeping sensitive data and credentials contained within the local environment, this approach strengthens the security measures mentioned earlier. This is particularly important when managing data from a wide variety of decentralized sources, such as SaaS platforms, IoT devices, and event streams, which are common in modern growth forecasting.

To move data securely without exposing it to public networks, Growth-onomics relies on private networking solutions like AWS PrivateLink, Azure Private Link, and Google Private Service Connect. This zero-trust architecture directly addresses a major concern for many organizations: 59% of companies report that data governance, security, or privacy is their top challenge when integrating private data with AI and machine learning systems.

The secure integration strategy at Growth-onomics is supported by tools like Talend, Informatica, Fivetran, and Snowflake. Talend and Informatica operate as the "Integrate" layer, offering native connectivity validated by Snowflake’s Ready Technology Validation Program. Snowflake acts as the secure backbone, enabling real-time data sharing with minimal latency and eliminating the need for traditional ETL processes. To further protect sensitive data during multi-source forecasting, data clean rooms are used. These allow multiple parties to collaborate and analyze data without exposing the underlying raw information, thanks to privacy-preserving configurations.

This multi-layered approach ensures Growth-onomics can deliver data-driven solutions across diverse areas like SEO, performance marketing, customer journey mapping, and analytics. At the same time, it upholds the data integrity and compliance standards that today’s businesses require. This robust strategy lays the groundwork for the secure integration tools discussed in the following sections.

1. Talend Data Fabric

Real-Time and Streaming Capabilities

Talend Data Fabric manages both batch and streaming data seamlessly, utilizing Change Data Capture (CDC) and integrating directly with tools like Apache Spark, Databricks, and Qubole. This allows for the creation of real-time data pipelines without the need for custom coding. It also supports containerized services and APIs, making it a powerful tool for real-time demand forecasting in highly secure environments.

Security Controls

Talend stands out as the first platform to offer private connections to AWS and Azure using PrivateLink. By isolating data endpoints from the public internet, Talend simplifies compliance with regulations while enhancing overall security. The platform includes advanced security tools such as built-in data masking and centralized Role-Based Access Control (RBAC). Additionally, it supports Single Sign-On (SSO) and Multi-Factor Authentication (MFA) through providers like Okta, OneLogin, PingFederate, and Microsoft Azure Active Directory – all without extra costs.

Compliance Support

Talend has earned multiple certifications, including SOC 2 Type 2, HIPAA (with Business Associate Agreements), GDPR, CCPA, ISO/IEC 27001:2013, and ISO/IEC 27701:2019. Impressively, it was the first integration provider to achieve ISO/IEC 27701:2019 certification, which extends its security management systems to include privacy protections. For GDPR compliance, Talend provides a Data Processing Addendum (DPA) as part of its Terms of Use, ensuring a legal framework for cross-border data transfers. Organizations managing healthcare data can also sign Business Associate Agreements (BAAs) with Talend to meet HIPAA requirements. These certifications demonstrate Talend’s commitment to secure, real-time data integration.

Governance Features

The Qlik Talend Trust Score™ offers a transparent way to monitor data quality by profiling datasets and tracking approvals through audit logs and stewardship dashboards. Task-based workflows assign specific responsibilities to data stewards, allowing them to flag issues and apply machine learning–powered recommendations to resolve inconsistencies.

2. Informatica Intelligent Data Management Cloud

Real-Time and Streaming Capabilities

The Informatica Intelligent Data Management Cloud (IDMC) offers real-time data integration through its API & App Integration services and AI-driven Master Data Management (MDM) capabilities. With support for over 50,000 metadata-aware connections, the platform empowers organizations to design automated workflows and deliver real-time personalization across hybrid and multi-cloud environments. For example, in 2025, deployments at Citizens Bank and Takeda achieved impressive results: 85% faster data onboarding, 99.95% uptime, and 96% cloud migration. These advancements streamlined critical operations while maintaining secure and scalable integration. Moving from these performance highlights, let’s explore how IDMC ensures data security throughout its lifecycle.

Security Controls

IDMC prioritizes security by encrypting all customer data at rest with AES-256 keys and safeguarding data in transit using TLS 1.2 or TLS 1.3 protocols. This ensures continuous encryption from the moment data leaves its source until it reaches its destination. Its multi-tenant architecture provides each customer with a dedicated "private" environment within the public cloud, ensuring complete data segregation. As described by Informatica’s Trust Center: "The Informatica Intelligent Data Management Cloud is built with performance, reliability, and security at its core to protect your most valuable asset". Additionally, users can manage encryption keys via command-line tools, and enabling TLS 1.3 is recommended for simpler, more secure cipher suites and enhanced performance.

Compliance Support

Informatica meets stringent global privacy standards, including Binding Corporate Rules (BCRs) approved by the European Data Protection Board, showcasing its commitment to secure international data transfers. For healthcare, the platform supports critical standards like HIPAA, HL7, and FHIR. Informatica also publishes a Transparency Report, which, as of February 24, 2025, confirmed the company had received no search warrants or national security requests for customer data since 2017. To bolster compliance further, regular audit reports on user privileges and permissions align with SOC 2 and HIPAA requirements. Beyond these measures, the platform’s governance features add another layer of protection.

Governance Features

IDMC’s Metadata System of Intelligence, powered by CLAIRE AI, delivers detailed data lineage and cataloging, helping users track data movement and transformations throughout their systems. The platform also provides audit trails to monitor user activity and privilege changes, which are essential for regulatory reporting and oversight. Additional tools like monitoring dashboards, alerts, and SLA management support both real-time and batch integrations. To enhance security, IDMC incorporates advanced authentication protocols, including SAML, LDAP, Kerberos, and Single Sign-On (SSO), alongside granular role-based access controls to enforce the principle of least privilege.

3. Fivetran

Real-Time and Streaming Capabilities

Fivetran offers high-performance data pipelines that sync data from over 700 sources – including SaaS tools, ERPs, and databases – directly to cloud destinations. By using log-based Change Data Capture (CDC), it captures inserts, updates, and deletes directly from database transaction logs. This method ensures high-volume replication with minimal delays. For manufacturers, this is a game-changer, as it allows seamless integration of ERP systems like SAP with supply chain data. The result? Better inventory management and the ability to respond immediately to supply chain disruptions.
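To see what replaying a change log involves, consider this small Python sketch. It illustrates the general CDC idea only, not Fivetran's implementation. The event shape (`op`, `key`, `row`) and the `apply_changes` helper are assumptions invented for the example.

```python
# Hypothetical change events, shaped loosely like what a log-based CDC reader might emit.
events = [
    {"op": "insert", "key": 1, "row": {"sku": "A-100", "qty": 50}},
    {"op": "insert", "key": 2, "row": {"sku": "B-200", "qty": 10}},
    {"op": "update", "key": 1, "row": {"sku": "A-100", "qty": 45}},
    {"op": "delete", "key": 2, "row": None},
]


def apply_changes(target: dict, change_events: list) -> dict:
    """Replay change events in log order onto an in-memory replica keyed by primary key."""
    for event in change_events:
        if event["op"] in ("insert", "update"):
            target[event["key"]] = event["row"]
        elif event["op"] == "delete":
            target.pop(event["key"], None)
        else:
            raise ValueError(f"unknown operation: {event['op']}")
    return target


replica = apply_changes({}, events)
print(replica)
```

Because only the changes are shipped, the destination stays in step with the source without re-reading whole tables, which is where the low-latency benefit comes from.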

The platform also features a "history mode", which keeps a complete record of all changes by storing them as new records. This makes it easier to analyze trends accurately. Currently, Fivetran syncs over 10.1 trillion rows of data each month, manages more than 22.2 million schema changes, and achieves historical sync speeds exceeding 500 GB per hour. A standout example comes from Pitney Bowes, which used Fivetran in 2025 to monitor over 800 million parcels in real time across 16 facilities. Vishal Shah, Data Architect Manager at Pitney Bowes, shared:

"When we introduced Fivetran to our facilities’ data processing, it revolutionized the flow, and we were able to achieve near real-time data from all 16 sites at the same time".

Next, let’s explore how Fivetran ensures the security of its data pipelines.

Security Controls

Beyond its impressive performance, Fivetran puts a strong emphasis on security. All data is encrypted both in transit and at rest using SSL/TLS 1.2+ and HTTPS protocols. Customers can also manage their own encryption keys through AWS, Azure, or Google. To enhance network security, Fivetran offers multiple options to bypass the public internet, such as AWS PrivateLink, Azure Private Link, Google Private Service Connect, VPN tunnels, and SSH tunnels.

For organizations with stringent security needs, Fivetran’s Hybrid Deployment model processes data within the company’s secure network while relying on Fivetran’s cloud for orchestration. The platform also supports column hashing, which anonymizes sensitive data fields but still allows them to be used in queries. Trenton Huey, Senior Manager of Data Engineering at Vida Health, remarked:

"Fivetran’s reputation spoke for itself. We knew it could move our large – and growing – volumes of data from all of our sources securely".

Compliance Support

When it comes to compliance, Fivetran checks all the boxes. It adheres to SOC 1 Type 2, SOC 2 Type 2, ISO 27001, ISO 27701, PCI DSS Level 1, HITRUST, HIPAA, GDPR, and CCPA standards. For healthcare organizations, the platform offers Business Associate Agreements (BAAs) and HITRUST certification under its Business Critical plan. To meet GDPR and CCPA requirements, Fivetran provides Regional Data Processing Agreements (DPAs) and allows users to choose specific regions for data processing.

National Australia Bank (NAB) demonstrated the platform’s capabilities in 2025 by using Fivetran to enhance real-time personalization and detect financial crimes. This implementation led to a 50% reduction in ingestion costs and a 30% improvement in machine learning performance. Joanna Gurry, Executive of Data Platforms at NAB, explained:

"Our strategies for supporting financial crime detection and real-time personalization, including the adoption of generative AI, require real-time data. Fivetran enables us to provide the best customer experience with fresh, reliable and compliant data".

Governance Features

Fivetran also strengthens its data management with robust governance tools. It offers Role-Based Access Control (RBAC) and supports automated user provisioning through SCIM integration with platforms like Okta and Microsoft Entra ID. The Fivetran Platform Connector enables centralized management of metadata and data lineage by syncing metadata and audit logs to a destination or internal catalog. This makes it easy for organizations to trace the origin, access history, and transformations of their data – an essential feature for audits and compliance checks. Additionally, column blocking and hashing functionalities help anonymize personally identifiable information (PII) while ensuring the data remains useful for analysis.

4. Confluent Cloud for Apache Kafka

Real-Time Streaming Capabilities

Confluent Cloud stands out with its real-time streaming features, designed to simplify secure data integration. Powered by a cloud-native engine, it offers a serverless Apache Kafka experience with automatic scaling. Users can take advantage of 120+ pre-built connectors and 80+ fully managed Kafka connectors to streamline their workflows. For processing data streams, the platform integrates Apache Flink, enabling teams to analyze and transform data as it moves through the system. With a 99.99% uptime SLA for multi-availability zone clusters, Confluent Cloud not only ensures reliability but also helps reduce the cost of managing Kafka by as much as 60%.

Take Citizens Bank, for example – they saved $1 million annually in IT costs while improving processing speeds by 50%. Similarly, BigCommerce automated maintenance tasks, saving 20 hours each week.

Security Controls

Security is a core focus of Confluent Cloud. All network traffic is encrypted using TLS 1.2, and data at rest is secured with encryption, offering the option for Bring Your Own Key (BYOK) across AWS, Azure, and Google Cloud. For organizations with heightened security needs, the platform provides Client-Side Field Level Encryption (CSFLE) to protect specific data fields and Client-Side Payload Encryption (CSPE) for full message encryption.

To enhance network security, Confluent Cloud supports VPC/VNet peering and private connectivity options like AWS PrivateLink, Azure Private Link, and Google Cloud Private Service Connect. Authentication methods include SAML/SSO, OAuth/OIDC, and mutual TLS (mTLS), while authorization is handled through Role-Based Access Control (RBAC) and Access Control Lists (ACLs) for topics and consumer groups.

Compliance Support

Confluent Cloud is built to meet rigorous compliance standards, supporting certifications such as SOC 1, SOC 2, SOC 3, ISO 27001, PCI DSS, HITRUST CSF, and ensuring readiness for GDPR and CCPA. Financial institutions use it to handle Cardholder Data Environment (CDE) events, ensuring compliance with PCI DSS and SOX. Real-time processing jobs can scan these streams for anomalies, like unauthorized changes to financial data, and alert security teams instantly. In healthcare, Kafka Connect is used to pull audit logs from Electronic Health Record (EHR) systems, with Flink SQL monitoring for HIPAA violations, such as unauthorized access to patient records.
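The monitoring logic in the healthcare example comes down to a filter over a stream of access events. The sketch below expresses that filter as a plain Python generator rather than Flink SQL, purely to show the shape of the check. The `AUTHORIZED` mapping, the event fields, and the user names are all hypothetical.

```python
from typing import Iterable, Iterator

# Hypothetical mapping of users to the patient records they are cleared to open.
AUTHORIZED = {
    "dr_lee": {"p-001", "p-002"},
    "nurse_kim": {"p-002"},
}


def unauthorized_access(events: Iterable[dict]) -> Iterator[dict]:
    """Yield each access event where the user is not cleared for the patient record."""
    for event in events:
        allowed = AUTHORIZED.get(event["user"], set())
        if event["patient_id"] not in allowed:
            yield event


ehr_events = [
    {"user": "dr_lee", "patient_id": "p-001"},
    {"user": "nurse_kim", "patient_id": "p-001"},
    {"user": "temp_admin", "patient_id": "p-002"},
]
alerts = list(unauthorized_access(ehr_events))
print(alerts)
```

In a streaming deployment the same predicate would run continuously against the audit topic, and each yielded event would be routed to the security team's alerting channel.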

Governance Features

Confluent Cloud’s governance tools are built around three core components: Stream Catalog for data discovery and classification, Stream Lineage for tracking data movement, and Stream Quality for enforcing schemas. Audit logs, stored for seven days, record every user action, administrative task, and system access. These logs can be exported to external security tools like Splunk or Elastic using the confluent-audit-log-events topic.

The Schema Registry ensures data consistency by enforcing standards across formats like Avro, JSON, or Protobuf. It also supports Data Contracts, which formalize agreements between data producers and consumers to maintain structure and integrity.
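Schema enforcement is easier to picture with a toy example. The following Python sketch checks records against a hand-written contract. It does not use the Schema Registry API or any Avro, JSON Schema, or Protobuf library. The `ORDER_CONTRACT` fields and the `violations` helper are assumptions for illustration.

```python
# Hypothetical contract for an "orders" stream: field name -> required Python type.
ORDER_CONTRACT = {"order_id": str, "amount": float, "currency": str}


def violations(record: dict, contract: dict) -> list:
    """Return human-readable contract violations; an empty list means the record conforms."""
    problems = []
    for field, expected_type in contract.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            problems.append(f"wrong type for {field}: expected {expected_type.__name__}")
    for field in record:
        if field not in contract:
            problems.append(f"unexpected field: {field}")
    return problems


good = {"order_id": "o-1", "amount": 19.99, "currency": "USD"}
bad = {"order_id": "o-2", "amount": "19.99", "note": "rush"}
print(violations(good, ORDER_CONTRACT))
print(violations(bad, ORDER_CONTRACT))
```

Rejecting the nonconforming record at the producer, before it reaches the topic, is what keeps downstream consumers from breaking on unexpected shapes.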

This suite of features strengthens Confluent Cloud’s role in enabling secure, real-time data integration, making it a valuable tool for tasks like accurate demand forecasting.


5. Snowflake Secure Data Sharing

Real-Time Streaming Capabilities

Snowflake takes a different approach to data sharing by eliminating the need for data duplication. Using a metadata-based sharing model, any updates made by providers – whether adding new objects, updating existing ones, or modifying rows – are immediately accessible to consumers. This means there’s no waiting around for traditional ETL processes to catch up.

With Dynamic Tables, incremental processing becomes more straightforward. Consumers can also create streams on shared tables or secure views to track data manipulation changes (DML), which is particularly helpful for building reactive data pipelines. To enable this functionality, providers must activate CHANGE_TRACKING on shared tables. This allows consumers to monitor changes in real time, creating a seamless flow of up-to-date information. These features not only streamline workflows but also align with Snowflake’s focus on secure and compliant data sharing.
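As a rough sketch of the two steps described above, the Python helper below builds the SQL a provider and a consumer might each run. The table and stream names are hypothetical, and the statements are only assembled as strings here; running them would require a Snowflake session and the appropriate privileges.

```python
def change_tracking_statements(provider_table: str, consumer_table: str, stream_name: str) -> dict:
    """Build the provider-side and consumer-side SQL for tracking changes on a shared table.

    Identifiers are interpolated directly, so they should come from trusted
    configuration, never from user input.
    """
    return {
        # Run by the provider on the table being shared.
        "provider": f"ALTER TABLE {provider_table} SET CHANGE_TRACKING = TRUE;",
        # Run by the consumer against the table as it appears in the database created from the share.
        "consumer": f"CREATE STREAM {stream_name} ON TABLE {consumer_table};",
    }


statements = change_tracking_statements(
    provider_table="sales.public.orders",        # hypothetical provider-side name
    consumer_table="shared_sales.public.orders",  # hypothetical consumer-side name
    stream_name="orders_changes",
)
for role, sql in statements.items():
    print(f"{role}: {sql}")
```

The consumer can then query the stream to pick up rows that changed since the last read, which is the basis for the reactive pipelines mentioned above.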

Security Controls

Snowflake ensures data security by enforcing strict read-only access for consumers, which means they cannot modify or delete the provider’s data. Providers maintain full control over what’s shared by using Role-Based Access Control (RBAC). This allows them to assign specific privileges at the database, schema, or table level. For organizations dealing with sensitive information, Snowflake offers Business Critical accounts, which include Tri-Secret Secure. This feature combines a customer-managed encryption key with a Snowflake-managed key, so data cannot be decrypted without both. Additionally, the Virtual Private Snowflake edition enhances security with network isolation.

Compliance Support

Snowflake adheres to major compliance standards like SOC 2, HIPAA, GDPR, and CCPA. Its Business Critical and Virtual Private Snowflake editions are tailored for industries with strict regulatory requirements, such as healthcare and finance. By avoiding data movement, Snowflake helps maintain data sovereignty and reduces the risk of exposure. Importantly, Snowflake enforces compliance boundaries, such as preventing HIPAA accounts from sharing data with non-HIPAA accounts, ensuring regulatory requirements are always met.

Governance Features

Snowflake offers robust governance tools to help providers maintain control and visibility over shared data. Providers can tag shared objects to give consumers context about data sensitivity and origin. All sharing activities are logged, and providers can revoke access to specific shares or objects whenever necessary. For added transparency, the Data Sharing Usage schema provides metrics on how consumers interact with the shared data. From a cost perspective, consumers only pay for the compute resources used to query shared data, while providers handle the storage costs.

6. Cleo Integration Cloud

Cleo Integration Cloud stands out as a powerful tool for secure data integration, specifically designed for the supply chain and logistics sectors. This platform emphasizes API support and "just-in-time" (JIT) integrations, enabling instant data exchanges alongside traditional batch processing methods. By bridging these systems, Cleo ensures applications can share and process data in real time.

Its real-time functionalities shine in areas like inventory updates, order validation, and delivery management. The platform also offers real-time monitoring dashboards, giving businesses a clear view of their processes. With over 4,000 customers and an impressive 95% renewal rate, Cleo has proven its reliability in managing intricate B2B data exchanges. This makes it an essential part of a secure, real-time integration framework for tasks like demand forecasting.

Security Controls

Cleo Integration Cloud goes beyond real-time capabilities by supporting over 20 secure data transfer protocols, such as AS2 and SFTP. It uses lightweight agents to securely connect cloud-based and on-premises systems, including WMS, TMS, and ERP solutions. Additionally, disaster recovery features come built into the platform, ensuring business continuity in case of unexpected disruptions.

Compliance and Governance

Designed to meet the high-security and compliance demands of complex supply chains, Cleo Integration Cloud offers end-to-end transaction tracking and managed file transfer (MFT) governance. These tools provide full visibility into data movements, helping teams monitor and meet SLAs consistently. The platform’s unified approach to EDI, APIs, and file-based workflows allows businesses to manage both modern and legacy integration processes securely from one centralized location. With its comprehensive monitoring capabilities, Cleo ensures that businesses remain compliant while streamlining their operations.

7. Integrate.io

Integrate.io offers lightning-fast Change Data Capture (CDC), reducing data processing times from 15-minute batch windows to under 60 seconds. This allows for continuous synchronization, making it ideal for real-time analytics and operational dashboards. Major enterprises like Samsung, Philips, and Caterpillar trust Integrate.io for their critical data integration needs, thanks to its proven reliability and robust security measures.

The platform’s pricing starts at a fixed $1,999 per month, covering unlimited data volumes. This pricing model can save users between 34% and 71% compared to consumption-based alternatives. With over 200 pre-built connectors and 220+ transformation templates, Integrate.io simplifies integration workflows while maintaining enterprise-level security. Let’s dive into the platform’s security, compliance, and governance features.

Security Controls

Integrate.io takes data protection seriously, employing advanced encryption and isolation techniques to secure sensitive information. One standout feature is its integration with AWS KMS for Field-Level Encryption (FLE), which ensures data is encrypted before it even leaves your network. What’s more, decryption keys remain solely in your control, giving you full authority over your data.

The platform enhances security further by avoiding persistent data storage: it doesn’t store records, copies, or logs of your data. Secure connections are established using SSH and reverse SSH tunnels, eliminating the need to expose vulnerable ports. Data in transit is safeguarded with SSL/TLS encryption, while sensitive data at rest is protected using industry-standard algorithms. Additional security measures, such as data masking, hashing, and obfuscation, help protect personally identifiable information during ETL processes.

Compliance Support

Integrate.io meets rigorous compliance standards, holding SOC 2 certification and undergoing annual third-party penetration testing. The platform complies with major regulations, including HIPAA (with Business Associate Agreements), GDPR (with a Data Processing Addendum), and CCPA. Its infrastructure is hosted on AWS data centers, which are certified under ISO 27001, SOC 1/2, and PCI Level 1 standards.

Governance Features

For organizations seeking transparency and control, Integrate.io offers built-in data lineage tracking and metadata cataloging, ensuring full visibility into data transformations and origins. Audit logs can be exported to your SIEM system for centralized monitoring and reporting. The platform also includes role-based access control (RBAC) with project-specific permissions, two-factor authentication (2FA), and SSO/SAML integration. Real-time pipeline health monitoring ensures you can keep an eye on your workflows without interruption.

"Being able to pull that into a central location and summarize it in a way that is understandable by our customers is critical to us."

– Gerald Heath, VP Technology, Gallus Golf

Comparison Table

When evaluating data integration tools, it’s essential to consider factors like pricing, real-time performance, security, compliance, and their ideal use cases. Here’s a breakdown of some popular platforms:

- Fivetran: Uses a usage-based pricing model tied to Monthly Active Rows (MAR), with the Standard plan starting at around $500 per million MAR.

- Talend Data Fabric: Targets enterprise-level users, with costs ranging from $150,000 to over $500,000 annually.

- Integrate.io: Offers a fixed-fee pricing structure starting at $1,999 per month, allowing for unlimited data volumes.
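A quick back-of-the-envelope calculation shows where a fixed fee and a usage-based rate would cross over. The sketch below uses only the two figures quoted above and assumes the usage rate is flat and linear, which real usage-based plans often are not (tiers, discounts, and minimums all change the picture), so treat the result as a rough guide.

```python
FLAT_MONTHLY_FEE = 1_999.0       # Integrate.io starting price quoted above (USD per month)
USAGE_RATE_PER_MILLION = 500.0   # approximate Fivetran Standard figure quoted above (USD per million MAR)


def usage_cost(monthly_active_rows: int) -> float:
    """Usage-based monthly cost under the simplifying assumption of a flat linear rate."""
    return monthly_active_rows / 1_000_000 * USAGE_RATE_PER_MILLION


def break_even_rows() -> float:
    """Monthly active rows at which the flat fee equals the linear usage cost."""
    return FLAT_MONTHLY_FEE / USAGE_RATE_PER_MILLION * 1_000_000


print(f"Break-even: about {round(break_even_rows()):,} monthly active rows")
for rows in (2_000_000, 10_000_000):
    print(f"{rows:,} MAR -> usage-based ${usage_cost(rows):,.2f} vs flat ${FLAT_MONTHLY_FEE:,.2f}")
```

Under these assumptions the crossover sits at roughly four million monthly active rows: below that, the usage-based rate is cheaper, and above it the flat fee wins.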

The table below provides a side-by-side comparison of their key features:

| Tool | Real-Time Capability | Key Security Features | Compliance Highlights | Ideal Use Case |
|---|---|---|---|---|
| Talend Data Fabric | Batch and streaming pipelines with built-in CDC and Spark support | Data masking, centralized RBAC, SSO and MFA, PrivateLink connections | SOC 2 Type 2, HIPAA (BAA), GDPR, CCPA, ISO/IEC 27001 | Regulated enterprises needing strong data quality controls and hybrid deployment options |
| Fivetran | Sync frequencies as fast as 1 minute (Enterprise plan) | Customer-managed keys, column hashing, Private Link, and SSH/VPN tunnels | PCI DSS Level 1, HITRUST, GDPR, HIPAA (BAA), SOC 1/2, ISO 27001 | Automated ELT for data-intensive enterprises or SaaS/ERP consolidation needs |
| Integrate.io | Sub-60-second CDC with continuous synchronization | Field-level encryption (AWS KMS) | SOC 2, HIPAA (BAA), GDPR (DPA), CCPA | E-commerce and environments relying heavily on REST APIs |

These differences translate into measurable gains in practice. For instance, Cemex leveraged Fivetran in 2025 to connect over 1,800 facilities, cutting SAP data delivery times from days to just minutes. Similarly, JetBlue used Fivetran to establish data pipelines in under two minutes – an effort that previously took weeks or even months. These real-world examples emphasize how the right tool can transform operational efficiency.

How to Choose the Right Secure Integration Stack for Demand Forecasting

When it comes to demand forecasting, the speed of data ingestion needs to align with your business goals. For tasks like fraud detection or instant retail personalization, real-time ingestion with sub-second latency is a must. On the other hand, batch processing – done hourly or daily – works perfectly for analyzing historical trends and can help cut down on compute costs. If you’re focusing on near-live inventory tracking or adjusting marketing campaigns, streaming ingestion with a latency of 1–3 minutes strikes the right balance. But speed isn’t the only factor – governance is just as important, especially when sensitive data is involved.

Governance and Tool Selection

Governance should be a top priority when choosing tools, especially if you’re dealing with regulated data like KYC verification or HIPAA-protected health records. In such cases, centralized ingestion is essential for maintaining audit trails and canonical modeling. Look for tools that offer features like Role-Based Access Control (RBAC), centralized secrets management (using tools like HashiCorp Vault or AWS Secrets Manager), and immutable audit logs to ensure a secure chain of custody. For industries with strict regulations, certifications such as HITRUST and PCI DSS Level 1 are non-negotiable.

A common analytics stack for demand forecasting might include Fivetran or Airbyte for ingestion, Snowflake or Databricks for storage, dbt for data transformation, and Tableau or Looker for visualization. It’s also critical to ensure your integration tools support automated schema updates, as this prevents disruptions in your forecasting workflows. For example, a regional hospital used Airbyte Enterprise Flex to maintain HIPAA compliance by keeping Electronic Protected Health Information (ePHI) on-premises within a private VPC, while orchestrating operations via a cloud control plane. This setup ensured sensitive data never passed through the public internet, enabling secure and timely demand forecasting.

Integration Patterns Matter

Beyond tool selection, the choice of integration pattern plays a pivotal role. A hybrid approach often works best: centralize high-value "golden records" for governance while using Zero Copy federation, which queries data where it lives instead of copying it, for transient datasets. This strategy minimizes storage costs while keeping data fresh. Zero Copy is particularly useful for exploratory analysis on large-scale datasets, where duplicating data would be too expensive. On the centralized side, Square, for instance, used Fivetran to integrate its global marketing data for fast and secure analytics.
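The hybrid approach can be expressed as a small routing policy. This is a sketch under stated assumptions: the `Dataset` fields and the `choose_pattern` function are hypothetical, and the rule that regulated data is always centralized follows the governance guidance earlier in this section rather than any specific product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dataset:
    name: str
    is_golden_record: bool  # high-value, governed entity such as customers or orders
    is_regulated: bool      # contains PII, PHI, or other regulated fields
    is_transient: bool      # short-lived or exploratory data


def choose_pattern(ds: Dataset) -> str:
    """Assumed policy: governed data is centralized, transient data is federated."""
    if ds.is_golden_record or ds.is_regulated:
        return "centralized ingestion"
    if ds.is_transient:
        return "zero copy federation"
    return "centralized ingestion"  # conservative default when nothing else applies


# Hypothetical datasets in a forecasting stack.
datasets = [
    Dataset("customer_master", is_golden_record=True, is_regulated=True, is_transient=False),
    Dataset("clickstream_sample", is_golden_record=False, is_regulated=False, is_transient=True),
]
for ds in datasets:
    print(f"{ds.name}: {choose_pattern(ds)}")
```

Checking governance flags before the transient flag means a regulated dataset is never federated just because it happens to be short-lived.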

Here’s a quick breakdown of integration patterns and their roles in demand forecasting:

| Integration Pattern | Latency Profile | Governance Strength | Typical Role in Forecasting Stack |
|---|---|---|---|
| Real-Time Ingestion | Sub-second | High (Centralized) | Immediate demand signals, fraud detection |
| Streaming Ingestion | 1–3 minutes | High (Centralized) | Near-live inventory tracking, campaign triggers |
| Batch Ingestion | Hourly/Daily | High (Centralized) | Historical baseline models, regulatory reporting |
| Zero Copy (Live Query) | Real-time (Source-dependent) | Decentralized (Source-level) | Ad-hoc exploratory analysis, live dashboards |
| Accelerated Query (Cache) | 15 minutes – 7 days | Moderate | Frequent BI reporting, engagement metrics |

Conclusion

Secure, real-time data integration is a game-changer for U.S. growth and marketing teams aiming to stay competitive. When your team can act in minutes instead of days, you gain a powerful edge in areas like demand forecasting and customer personalization. The tools highlighted in this guide take care of the heavy lifting – safely moving data, managing millions of schema changes, and processing trillions of data rows. This ensures your most critical data remains both protected and accessible.

A well-designed integration stack not only safeguards sensitive marketing data but also ensures it’s readily available for analysis. Industry leaders show that balancing security with speed sets high-performing analytics teams apart from those bogged down by manual workflows.

When selecting tools for your organization, start by addressing compliance requirements. Whether it’s CCPA for consumer data, PCI DSS Level 1 for payment information, or HIPAA for healthcare marketing, compliance should guide your decisions. Next, consider your latency needs – do you require sub-second real-time processing for fraud detection, or is hourly batch processing sufficient for analyzing historical trends? Finally, ensure the platform supports key security features like column masking, RBAC, and end-to-end encryption.

The shift toward real-time integration is gaining momentum. For example, National Australia Bank saw a 30% improvement in machine learning performance after implementing real-time data pipelines, while Cemex reduced their time to insights from days to minutes across more than 1,800 global facilities. These outcomes set the benchmark for data-driven growth teams.

As discussed earlier, automation and uptime are critical. Your integration stack should simplify your workflows, not complicate them. Look for tools that handle schema drift automatically, promise 99.9% uptime, and offer flexible deployment options that fit your security needs. Investing in secure, automated integration frees your team to focus on what truly matters – turning data into actionable insights and strategies, rather than managing it.

FAQs

What should I look for in a secure real-time data integration tool?

When choosing a secure real-time data integration tool, it’s crucial to focus on security and compliance. Make sure the vendor can provide current audit reports such as SOC 1 Type 2 and SOC 2 Type 2. HIPAA and GDPR are regulations rather than certifications, so if your organization falls under them, check that the vendor will sign the relevant agreements (a Business Associate Agreement for HIPAA, a Data Processing Agreement for GDPR) and offers the controls those laws require.

Pay close attention to the encryption protocols the tool uses. Look for TLS encryption to secure data in transit and AES-256 encryption for protecting data at rest. If the platform offers customer-managed encryption keys, that’s a bonus – it gives you more control over your data’s security. Additional features like data masking or anonymization can provide an extra layer of protection for sensitive information.
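On the client side, the in-transit requirement can be enforced rather than assumed. The sketch below uses Python's standard `ssl` module to build a context that verifies certificates and refuses protocol versions below TLS 1.2; the function name `build_tls_context` is hypothetical, and the TLS 1.2 floor is an assumption based on the "TLS 1.2+" baseline cited at the top of this article. Encryption at rest with AES-256 is normally handled by the platform or a dedicated cryptography library and is not shown here.

```python
import ssl


def build_tls_context() -> ssl.SSLContext:
    """Client-side TLS context that verifies certificates and rejects anything below TLS 1.2."""
    context = ssl.create_default_context()  # enables CA verification and hostname checks
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


ctx = build_tls_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

A context like this can be passed to standard-library clients such as `http.client.HTTPSConnection(host, context=ctx)` so that a misconfigured endpoint fails loudly instead of silently downgrading.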

Lastly, assess the tool’s network and access controls. Secure connection options, such as VPNs or private-link services, are essential for keeping data traffic off public networks. Strong identity management features, including multi-factor authentication and role-based access control, are critical. Combine these with detailed audit logs to ensure both security and traceability. By focusing on these elements, you can select a tool that not only protects your data but also meets the compliance standards that apply to your organization, whether U.S. rules like HIPAA and CCPA or the EU’s GDPR.

How do real-time data integration tools ensure compliance with regulations like GDPR and HIPAA?

Real-time data integration tools help businesses stay aligned with regulations like GDPR and HIPAA by building privacy and security safeguards directly into their workflows. These tools can classify incoming data, flag sensitive fields such as personal or health information, and apply measures like encryption or tokenization before the data is stored or transmitted. Applying these measures early in the pipeline reduces the number of systems that ever handle raw sensitive values.
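The classify-then-protect step can be sketched in a few lines. This example is illustrative only: the list of sensitive field names is hypothetical, the key is a placeholder that would normally come from a secrets manager, and the keyed HMAC used here produces one-way pseudonyms, which differs from the reversible, vault-backed tokenization some platforms offer.

```python
import hashlib
import hmac

# Hypothetical field names treated as sensitive; a real classifier would be richer.
SENSITIVE_FIELDS = {"email", "ssn", "diagnosis"}


def tokenize(value: str, key: bytes) -> str:
    """Deterministic keyed token: the same input and key always yield the same token."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def protect_record(record: dict, key: bytes) -> dict:
    """Return a copy of the record with sensitive fields replaced by tokens."""
    return {
        field: tokenize(str(value), key) if field in SENSITIVE_FIELDS else value
        for field, value in record.items()
    }


key = b"placeholder-key-load-from-a-secrets-manager"  # assumption: never hard-code in practice
record = {"patient_id": 1042, "email": "pat@example.com", "visits": 3}
safe = protect_record(record, key)
print(safe)
```

Because the tokens are deterministic, downstream joins and counts on the tokenized column still work, while the raw value never leaves the ingestion step.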

In addition to securing data, these platforms streamline compliance by automating tasks like managing consent, responding to data subject requests, and enforcing policies in real time. Features such as role-based access controls, audit logs, and real-time monitoring add an extra layer of transparency, enabling organizations to quickly spot and address potential breaches. By embedding regulatory checks into every step of the data pipeline, these tools transform compliance from a manual, periodic task into an ongoing, automated process.

What security features should I prioritize in real-time data integration tools to protect sensitive information?

When choosing a real-time data integration tool, it’s essential to prioritize data protection, access controls, and compliance to keep sensitive information secure.

Data protection involves using robust encryption methods. Look for tools that support TLS for data in transit and AES-256 for data at rest. Features like customer-managed encryption keys and options to mask or suppress sensitive data fields add an extra layer of security. Secure networking capabilities, such as VPNs or private connections, help minimize exposure to the public internet.
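Masking differs from encryption in that the original value is partially hidden for display or analysis rather than made recoverable with a key. Here is a minimal sketch; the function names and the "show only the last four characters" rule are assumptions chosen for illustration.

```python
def mask_value(value: str, visible: int = 4, mask_char: str = "*") -> str:
    """Hide all but the last `visible` characters of a value."""
    if visible <= 0 or len(value) <= visible:
        return mask_char * len(value)  # too short to reveal anything safely
    return mask_char * (len(value) - visible) + value[-visible:]


def mask_record(record: dict, masked_fields: set) -> dict:
    """Return a copy of the record with the named fields masked."""
    return {
        field: mask_value(str(value)) if field in masked_fields else value
        for field, value in record.items()
    }


# Hypothetical order record; "card_number" is the only field masked here.
order = {"order_id": 981, "card_number": "4111111111111111", "total": 59.90}
print(mask_record(order, {"card_number"}))
```

Suppressing a field entirely is the stricter alternative: drop the key from the record instead of masking it when downstream users have no need for even a partial value.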

For access controls, opt for platforms that follow zero-trust principles. This includes multi-factor authentication, role-based access control (RBAC), and centralized credential management. Tools with immutable audit logs allow you to track all data activity, which is crucial for security and compliance reviews.
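Role-based access control reduces to a lookup that denies by default. The roles and permission strings below are hypothetical and exist only to illustrate the pattern; real platforms define their own role models.

```python
# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS = {
    "analyst": {"read:forecasts"},
    "data_engineer": {"read:forecasts", "write:pipelines"},
    "admin": {"read:forecasts", "write:pipelines", "manage:credentials"},
}


def is_allowed(roles: list, permission: str) -> bool:
    """Deny by default: grant only if some assigned role lists the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


print(is_allowed(["analyst"], "read:forecasts"))      # analysts may read forecasts
print(is_allowed(["analyst"], "manage:credentials"))  # but may not manage credentials
print(is_allowed(["contractor"], "read:forecasts"))   # unknown roles get nothing
```

Unknown roles and unlisted permissions both resolve to a denial, which is the behavior zero-trust designs expect.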

Finally, make sure the tool aligns with the frameworks and regulations that apply to you, such as SOC 2, ISO 27001, HIPAA, or GDPR. Regular security audits, vulnerability scans, and penetration tests show the provider’s dedication to safeguarding data. These elements together establish a strong foundation for a secure real-time data integration solution.