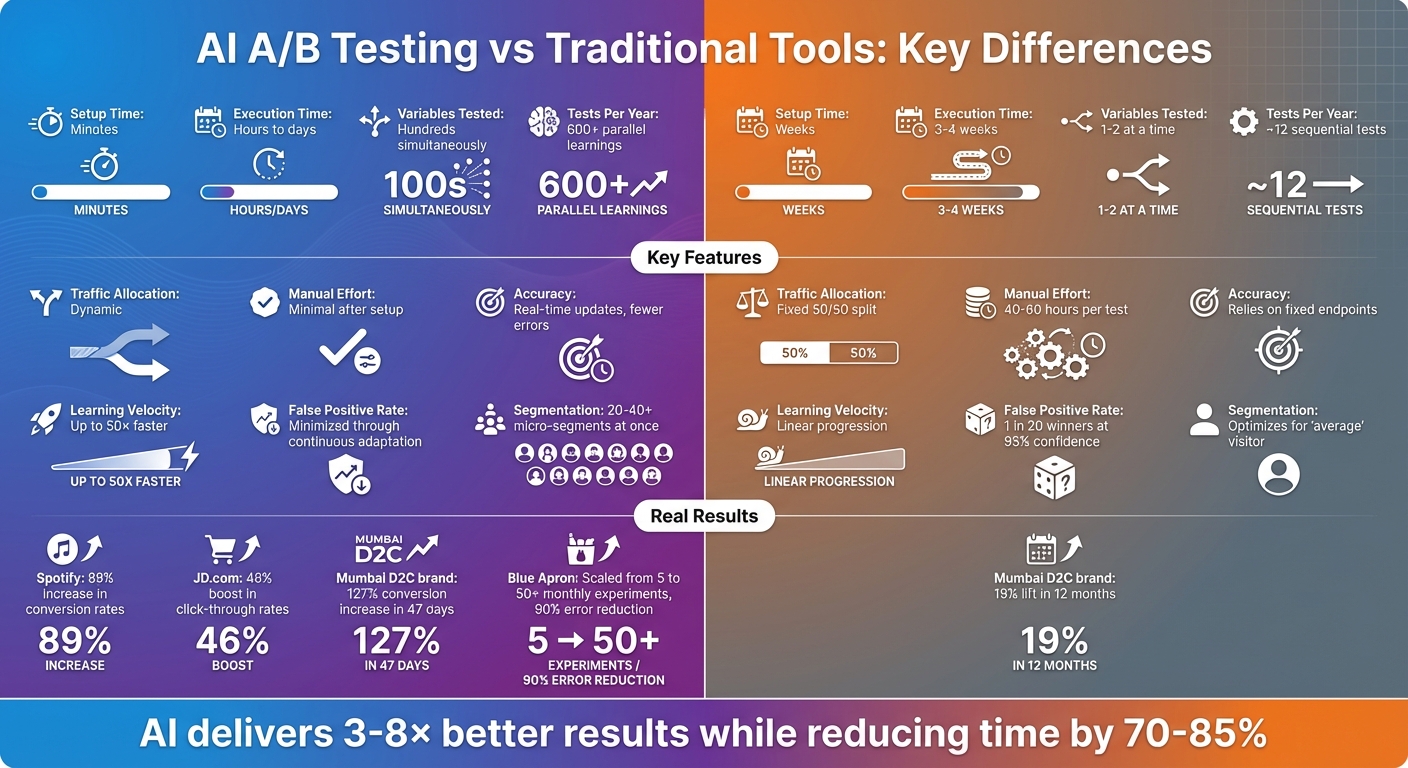

AI A/B testing uses machine learning to analyze data, adjust traffic dynamically, and test multiple variables at once, delivering faster, more precise results. Traditional A/B testing, on the other hand, involves manual configurations, fixed traffic splits, and longer timelines to gather statistically reliable insights.

Key Highlights:

- AI testing speeds up results from weeks to hours or days.

- AI dynamically allocates traffic to better-performing versions, unlike fixed splits in traditional methods.

- AI handles complex tests with hundreds of variables, while traditional tools focus on one or two at a time.

- AI reduces manual effort, freeing teams to focus on strategy.

Quick Comparison:

| Feature | AI A/B Testing | Traditional A/B Testing |
|---|---|---|
| Setup Time | Minutes | Weeks |
| Execution Time | Hours to days | 3–4 weeks |
| Traffic Allocation | Dynamic | Fixed |
| Variables Tested | Hundreds simultaneously | 1–2 at a time |
| Manual Effort | Minimal after setup | High |
| Accuracy | Real-time updates, fewer errors | Relies on fixed endpoints |

AI-powered platforms are transforming how businesses optimize performance, offering faster insights and higher efficiency compared to traditional methods.

AI vs Traditional A/B Testing: Speed, Efficiency and Accuracy Comparison


How the Methods Work Differently

AI-driven and traditional A/B testing differ not just in speed but also in how decisions are made – manual versus automated. This distinction shapes every phase of the testing process. While traditional methods rely heavily on human oversight from start to finish, AI platforms automate much of the tactical work, allowing teams to focus more on strategy. This fundamental shift creates a clear contrast in how tests are set up and executed.

Setup and Test Execution

Traditional A/B testing typically begins with a manual brainstorming phase that can drag on for weeks. Teams audit data, analyze user behavior, and develop hypotheses based on intuition or past experiences. Afterward, they manually configure the test – defining the audience, setting fixed traffic splits (usually 50/50), and testing one variable at a time to maintain statistical accuracy.

AI platforms flip this process on its head. They automatically analyze website metrics and user behavior, generating data-backed hypotheses in mere minutes. Instead of focusing on a single variable, AI systems can test hundreds of variations simultaneously across different user groups, uncovering interactions that traditional methods might miss. For instance, in late 2024, a Bangalore-based electronics company estimated it would need 24 weeks to test eight homepage elements sequentially using traditional tools. By adopting an AI-driven approach, they tested all elements and their interactions in just 18 days, achieving a 94% lift in conversions compared to the projected 25% from manual testing.

Traditional testing also demands significant time and expertise – around 40 to 60 hours of skilled human effort per test for analysis, design, and implementation. AI platforms eliminate much of this workload, freeing teams to focus on broader strategic goals.

Once the tests are live, the way traffic is managed highlights another key difference between these approaches.

Traffic Allocation and Adjustments

Traditional tools stick to fixed traffic allocations throughout the test. For example, if 50% of visitors see version A and 50% see version B, those ratios remain unchanged until the test ends. This rigidity means that underperforming versions continue to receive traffic, even when early results suggest they’re not working, potentially leading to lost conversions.
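To make the fixed split concrete, here is a minimal Python sketch of one common way a 50/50 allocation is implemented: hashing a user ID so each visitor always lands in the same bucket. The function name `assign_variant`, the experiment label, and the SHA-256 bucketing scheme are illustrative assumptions, not the mechanism of any particular testing tool.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split_a: float = 0.5) -> str:
    """Deterministically place a user in variant "A" or "B" using a fixed split.

    Hypothetical helper: the same user always gets the same variant for a given
    experiment, and split_a stays constant for the life of the test.
    """
    # Hash the experiment name together with the user ID so that separate
    # experiments bucket users independently of one another.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 32 bits of the hash into a number in [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "A" if bucket < split_a else "B"


if __name__ == "__main__":
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}", "homepage-hero")] += 1
    print(counts)  # close to an even split, and identical on every rerun
```

Because `split_a` never changes while the test runs, a weaker variant keeps receiving its full share of traffic until the end date, which is the rigidity described above.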

AI platforms take a smarter approach, using Multi-Armed Bandit (MAB) algorithms to dynamically adjust traffic in real time. As data comes in, the system shifts more traffic toward better-performing variations while reducing exposure to weaker ones. This method balances "exploration" (testing to find the best option) with "exploitation" (maximizing the impact of the winner). The result? Fewer visitors see ineffective versions, reducing conversion risks and speeding up decision-making.
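One widely used bandit strategy is Thompson sampling. The sketch below simulates it for a conversion metric: each variant keeps a Beta distribution over its conversion rate, and every new visitor is routed to whichever variant draws the highest plausible rate. The conversion rates in the example are made up, and commercial platforms may use other bandit algorithms; this illustrates the exploration/exploitation trade-off rather than any vendor's implementation.

```python
import random


def thompson_sampling(true_rates, n_visitors, seed=0):
    """Simulate Thompson sampling over variants with yes/no (convert or not) outcomes.

    true_rates are hypothetical conversion rates that the algorithm never sees
    directly. Returns how many visitors were shown each variant.
    """
    rng = random.Random(seed)
    k = len(true_rates)
    successes = [0] * k  # conversions observed per variant
    failures = [0] * k   # non-conversions observed per variant
    shown = [0] * k
    for _ in range(n_visitors):
        # Draw a plausible conversion rate for each variant from its
        # Beta(1 + successes, 1 + failures) posterior.
        samples = [rng.betavariate(1 + successes[i], 1 + failures[i]) for i in range(k)]
        # Exploitation: show the variant with the highest sampled rate.
        # Exploration happens naturally, because uncertain variants still
        # produce the top draw some of the time.
        arm = max(range(k), key=lambda i: samples[i])
        shown[arm] += 1
        # Simulate whether this visitor converts.
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return shown


if __name__ == "__main__":
    print(thompson_sampling([0.030, 0.036, 0.045], n_visitors=20_000))
```

In a typical run the strongest variant collects the largest share of visitors, while weaker variants still get occasional traffic whenever their sampled rate happens to come out on top.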

Real-Time Data Processing

Traditional methods rely on a wait-and-see approach, where results are analyzed only after the test concludes. If early findings suggest changes are needed, teams often have to stop the test, reconfigure it, and start over – wasting time and resources.

AI platforms, on the other hand, process data in real time, updating probabilities and adjusting parameters as the test progresses. By using Bayesian inference, these systems provide continuously updated probability distributions rather than waiting for a final "winner or loser" verdict. This real-time adaptability allows AI-driven tests to respond to unexpected changes, like traffic surges or seasonal trends. For example, in early 2025, a Mumbai-based D2C beauty brand tested 127 variations simultaneously using an AI platform. In just 47 days, their conversion rate jumped from 1.4% to 3.18% – a 127% increase. In comparison, a similar brand using traditional methods took 12 months and 47 sequential tests to achieve only a 19% lift.
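The "continuously updated probability" idea can be sketched with a standard Beta-Binomial model: start each variant from a uniform Beta(1, 1) prior, update it with the conversions observed so far, and estimate by sampling the chance that B's true conversion rate exceeds A's. The prior, the function name, and the figures below are assumptions for illustration, not a specific platform's method.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=100_000, seed=0):
    """Estimate P(true rate of B > true rate of A) given the data seen so far.

    Assumes a uniform Beta(1, 1) prior on each variant's conversion rate, so the
    posterior after c conversions out of n visitors is Beta(1 + c, 1 + n - c).
    """
    rng = random.Random(seed)
    wins = 0
    for _ in range(draws):
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a  # True counts as 1
    return wins / draws


if __name__ == "__main__":
    # Hypothetical interim snapshot: 1.40% vs 1.75% conversion on 10,000 visitors each.
    print(prob_b_beats_a(conv_a=140, n_a=10_000, conv_b=175, n_b=10_000))
```

Rerunning the function as new visitors arrive gives an updated estimate at any point, instead of a single verdict at a fixed end date.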

"Since we build rapid prototypes quite often, using AI has helped us code A/B tests faster and without bugs. We’re able to produce rapid prototypes quickly, increasing our testing volume and rapidly validating hypotheses." – Jon MacDonald, CEO, The Good

Speed and Efficiency Comparison

Traditional testing methods often take 3–4 weeks per test to reach the required sample size for statistical reliability, even when early results suggest a clear winner. This step-by-step approach, which evaluates one element at a time, can quickly become a lengthy process when multiple variables need to be tested.
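The 3–4 week figure follows from how many visitors a fixed-split test needs. Below is a minimal sketch of the standard sample-size formula for comparing two conversion rates (two-sided test, 95% confidence, 80% power). The 3% baseline, 20% relative lift, and 1,000 daily visitors are hypothetical inputs.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed in EACH arm of a fixed 50/50, two-sided two-proportion test."""
    p1 = baseline                        # control conversion rate
    p2 = baseline * (1 + relative_lift)  # rate we want to be able to detect
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    n = sample_size_per_arm(baseline=0.03, relative_lift=0.20)
    daily_visitors = 1_000  # hypothetical traffic, split evenly across both arms
    days = math.ceil(2 * n / daily_visitors)
    print(n, days)
```

With these inputs the formula calls for roughly 13,900 visitors per variant, or about 28 days at 1,000 visitors a day. Halving the detectable lift roughly quadruples the required sample, which is why small improvements take so long to confirm with a fixed-split test.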

Execution Time

AI platforms drastically shorten testing timelines, delivering results in hours or days. Multi-Armed Bandit algorithms, for example, can cut runtimes by up to 40%, making these platforms especially useful for campaigns with tight deadlines. Unlike traditional methods, these systems use sequential analysis to identify meaningful differences as soon as they emerge, eliminating the need to wait for a fixed endpoint.

Take Blue Apron as an example. In 2025, the company scaled its testing from 5 to over 50 monthly experiments using Optimizely’s AI-powered platform, while also reducing errors by 90%. This improvement not only sped up individual tests but also enabled the company to run multiple experiments simultaneously, all without increasing team size or workload. The result? Faster execution and reduced manual effort.

Resource Requirements

Traditional A/B testing demands heavy manual involvement. Teams often dedicate up to 80% of their time maintaining existing tests – like updating scripts or resolving tracking issues – leaving only about 10% of their time for designing new experiments.

AI platforms flip this dynamic. While the initial setup may require tasks like data preparation and model configuration, these systems largely handle routine tasks autonomously once operational. For example, automated hypothesis generation can shrink the pre-test phase from 3 days to just 4 hours, and self-healing technology ensures tests stay up-to-date with 95% accuracy. This autonomy allows teams to focus on high-level strategies, such as targeting key customer segments or refining messaging, instead of getting bogged down in technical upkeep.

"I use [AI] to process data more efficiently, automate repetitive tasks, and be a more concise communicator. I embrace it for the doing aspects of my job but never for the thinking aspects."

– Tracy Laranjo, CRO Strategist

Additionally, traditional testing often requires hiring specialized Software Development Engineers in Test (SDETs), who earn 80% more than manual testers. AI platforms can reduce or even eliminate this need, enabling smaller teams to achieve testing speeds and capacity typically only seen at enterprise levels.

Comparison Table: Speed and Efficiency

| Factor | Traditional Tools | AI-Driven Platforms |
|---|---|---|
| Time to Results | 3–4 weeks per test | Hours to days |
| Tests Per Year | ~12 sequential tests | 600+ parallel learnings |
| Manual Effort Per Test | 40–60 hours | Minimal post-setup |
| Maintenance Burden | 80% of total effort | Near zero with self-healing |
| Learning Velocity | Linear progression | Up to 50× faster |
| Testing Capacity | 1–2 variables at a time | Hundreds of variables simultaneously |

Accuracy and Scalability Differences

At a 95% confidence level, a traditional A/B test has a 5% chance of declaring a "winner" when the two variants actually perform the same, so roughly one in 20 comparisons with no real difference produces a false positive. On top of that, 41% of so-called winners tend to regress within 90 days. AI platforms address these issues with continuous adaptation and Bayesian optimization, which adjust dynamically as new data arrives instead of relying on fixed endpoints. These capabilities highlight AI’s edge in both accuracy and scalability.
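The 5% figure can be checked by simulating A/A tests, where both arms share the same true conversion rate, so every "significant" result is a false positive. The sketch below uses a pooled two-proportion z-test; the function names, traffic, and conversion numbers are hypothetical.

```python
import math
import random
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions) in both arms
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def aa_false_positive_rate(n_tests=1_000, n_per_arm=1_000, rate=0.05, seed=0):
    """Run simulated A/A tests (no real difference) and return the share called 'significant'."""
    rng = random.Random(seed)
    false_positives = 0
    for _ in range(n_tests):
        # Both arms draw from the SAME hypothetical conversion rate.
        conv_a = sum(rng.random() < rate for _ in range(n_per_arm))
        conv_b = sum(rng.random() < rate for _ in range(n_per_arm))
        if two_proportion_p_value(conv_a, n_per_arm, conv_b, n_per_arm) < 0.05:
            false_positives += 1
    return false_positives / n_tests


if __name__ == "__main__":
    print(aa_false_positive_rate())
```

The returned share should land near 0.05. How many of a team's declared winners are false also depends on how often the tested changes have any real effect, so the share of false winners can be well above one in 20 when most ideas are neutral.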

Pattern Recognition and Anomaly Detection

AI systems excel at detecting patterns and anomalies in real time. They constantly monitor data streams, flagging unexpected changes like system glitches, sudden spikes in traffic, or unusual user behavior that could compromise results. This kind of automated oversight is especially valuable during off-hours or when managing multiple simultaneous tests. Additionally, machine learning models handle data cleaning automatically, eliminating duplicates and inconsistencies – tasks that would otherwise require manual intervention.
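A simple version of this kind of monitoring is a rolling z-score: compare each new hour of traffic against the mean and standard deviation of the previous 24 hours and flag large deviations. This is a minimal sketch under that assumption; production systems may use more robust, seasonality-aware baselines, and the window and threshold values here are arbitrary choices.

```python
from statistics import mean, stdev


def flag_anomalies(hourly_visits, window=24, threshold=3.0):
    """Return the indexes of hours whose traffic sits more than `threshold`
    standard deviations away from the mean of the preceding `window` hours."""
    flagged = []
    for i in range(window, len(hourly_visits)):
        history = hourly_visits[i - window:i]
        mu, sigma = mean(history), stdev(history)
        # Skip perfectly flat history, where a z-score is undefined.
        if sigma > 0 and abs(hourly_visits[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


if __name__ == "__main__":
    # Hypothetical two days of steady hourly traffic with one sudden spike.
    traffic = [1000 + (i % 5) * 10 for i in range(48)]
    traffic[40] = 5000
    print(flag_anomalies(traffic))  # [40]
```

Once a spike like this is flagged, a platform can pause or exclude the affected period instead of letting it skew the comparison between variants.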

Another standout feature is interaction detection. Traditional A/B tests often evaluate elements in isolation, which means they can miss effective combinations of variables. AI, on the other hand, tests all possible combinations at once, uncovering synergies that manual methods overlook. It also automates micro-segmentation, tailoring experiences for 20–40 distinct visitor groups based on factors like location, device, or behavior. This level of granularity is simply unachievable with manual testing due to sample size limitations.
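Two ideas from this paragraph can be sketched in a few lines: enumerating every combination of variants (a full-factorial design) shows how quickly the number of test cells grows, and a 2×2 comparison shows what an interaction effect is. The element names and conversion rates below are hypothetical, and real platforms may rely on statistical models rather than measuring every cell directly.

```python
from itertools import product


def full_factorial(elements):
    """Every combination of variants across page elements (a full-factorial design).

    elements maps a hypothetical element name to its list of variants."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*(elements[name] for name in names))]


def interaction_effect(rates):
    """Interaction in a 2x2 test.

    rates maps (headline, button) -> observed conversion rate, where 0 = control
    and 1 = new version. A non-zero result means the button's effect depends on
    which headline is shown, which testing each element alone cannot reveal."""
    button_effect_old_headline = rates[(0, 1)] - rates[(0, 0)]
    button_effect_new_headline = rates[(1, 1)] - rates[(1, 0)]
    return button_effect_new_headline - button_effect_old_headline


if __name__ == "__main__":
    # Eight elements with two versions each.
    homepage = {f"element_{i}": ["control", "variant"] for i in range(8)}
    print(len(full_factorial(homepage)))  # 256, i.e. 2**8 combinations
    rates = {(0, 0): 0.030, (0, 1): 0.033, (1, 0): 0.033, (1, 1): 0.042}
    print(round(interaction_effect(rates), 4))  # 0.006
```

Here each change alone adds 0.3 percentage points, but together they add 1.2, leaving a 0.6-point interaction that a one-element-at-a-time test would miss. The same enumeration also shows the cost: 256 cells split traffic very thinly.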

Handling Complex Tests

AI platforms are particularly adept at managing complex tests with numerous variables. For instance, Spotify leveraged AI-driven automation via Smartly.io to test over 2,400 creative variations across 30 campaigns, leading to an 89% increase in conversion rates and a 34% reduction in cost per acquisition. Traditional tools, by contrast, are typically limited to testing just 1–2 variables at a time.

Similarly, JD.com used a "Comparison Lift" bandit-based system to run over 1,500 campaigns, achieving an average 46% boost in click-through rates and 27% more clicks compared to static A/B testing. Toyota France also saw impressive results, reporting a 97% increase in leads after using Kameleoon’s Predictive Targeting AI to pinpoint and engage high-intent visitors. These examples underscore how AI platforms can handle complexities that would overwhelm traditional methods, enabling marketers to test and optimize hundreds or even thousands of variations simultaneously.

Comparison Table: Accuracy and Scalability

| Factor | Traditional Tools | AI-Driven Platforms |
|---|---|---|
| False Positive Rate | 5% of no-difference comparisons flagged as winners (95% confidence) | Minimized through continuous adaptation |
| Anomaly Detection | Manual review required | 24/7 automated monitoring |
| Interaction Testing | Tests elements in isolation | Tests all combinations simultaneously |
| Segmentation Capacity | Optimizes for "average" visitor | 20–40+ micro-segments at once |
| Complexity Handling | 1–2 variables per test | Thousands of variations in parallel |
| Winner Regression Rate | 41% regress within 90 days | Reduced through real-time adaptation |

How Growth-onomics Uses AI A/B Testing

Growth-onomics’ AI Testing Approach

Growth-onomics has made AI-driven A/B testing a cornerstone of its marketing strategy, using it to fine-tune digital campaigns with remarkable speed and precision. Instead of relying on traditional brainstorming sessions, the agency leverages AI to quickly generate a wide array of testable variations. This process allows for faster identification of customer pain points and persuasion techniques that might go unnoticed by human teams.

By blending machine learning with human expertise, Growth-onomics can test up to 20 landing page variations in just one afternoon – a process that used to take weeks. This streamlined method is powered by multi-armed bandit algorithms, which dynamically adjust traffic allocation to the best-performing variations. The result? Faster insights and improved conversion rates across campaigns focused on Performance Marketing, SEO, and UX optimization.

The agency’s AI tools also excel at predicting which creative elements will succeed, boasting an accuracy rate of over 90%. Additionally, these tools can identify ad fatigue early, ensuring timely updates that keep campaigns fresh and effective. In fact, AI-driven copywriting has delivered a 40.3% boost in conversion rates compared to traditional methods.

This approach isn’t just about efficiency – it’s about delivering measurable results for businesses of all sizes.

Benefits for Small and Medium Businesses

Growth-onomics’ advanced testing methods offer particular advantages for small and medium businesses (SMBs), helping them compete with larger enterprises. By minimizing the costs of integrating and analyzing AI tools, the agency makes cutting-edge optimization accessible to smaller players. SMBs using these AI-powered strategies have reported an average revenue increase of 34% and cost savings of 38%.

The agency focuses on automating repetitive, time-consuming tasks like customer service triage and marketing copy creation, areas where SMBs often face challenges. Growth-onomics also eliminates much of the human bias that can skew traditional test results – an issue that affects 71% of marketers. By running at least one high-impact experiment every two weeks, the agency ensures clients maintain a steady flow of actionable insights. This systematic approach helps SMBs avoid costly testing mistakes, which can lead to a 10% loss in potential revenue.

With these AI-driven tools and strategies, Growth-onomics empowers SMBs to achieve meaningful growth without the heavy resource demands typically associated with such innovations.

Conclusion

Summary of AI vs Traditional Tools

The gap between AI-driven and traditional A/B testing lies in speed, intelligence, and scale. Traditional tools rely on manually created hypotheses, fixed 50/50 traffic splits, and often take weeks to gather meaningful results. On the other hand, AI leverages machine learning to analyze thousands of user sessions, dynamically adjusts traffic toward better-performing variants using Multi-Armed Bandit algorithms, and delivers actionable insights within days instead of months.

Traditional methods typically test one or two variants at a time, while AI evaluates hundreds of combinations simultaneously. This approach uncovers interaction effects that would otherwise remain hidden. The impact is clear: AI-powered optimization can deliver results that are 3–8× better while reducing the time required by 70–85%. For example, JD.com increased its click-through rate by 46%, and Spotify achieved an impressive 89% boost in conversion rates.

Another key difference is how traffic is managed. Traditional testing often wastes resources on underperforming variants, while AI continuously reallocates traffic toward top-performing options – recovering revenue that might otherwise be lost. Instead of relying on binary win/loss outcomes, AI uses Bayesian inference to provide ongoing probability updates, allowing for faster and more informed decisions.

These advancements highlight a future where optimization is no longer a periodic task but an ongoing, autonomous process.

The Future of A/B Testing

The trajectory of A/B testing is moving toward autonomous optimization, where websites evolve continuously rather than waiting for scheduled redesigns.

"Instead of waiting weeks for test results, we’re seeing real-time results that we’re continuously fine-tuning. Compared to traditional digital marketing campaigns, they generate double-digit increases for us in consumer engagement." – Joe Park, CTO of Yum Brands

This shift isn’t just for large enterprises. Agencies like Growth-onomics are making advanced AI testing accessible to small and medium businesses without the need for a $200,000+ annual budget. By focusing on high-quality data, maintaining a consistent testing rhythm, and using AI to automate repetitive tasks, companies can generate insights faster, and that pace of learning is what sets market leaders apart from the rest.

The real question isn’t whether AI testing should be adopted, but how quickly businesses can integrate it to unlock measurable growth opportunities.

FAQs

When should I choose AI A/B testing over traditional A/B testing?

AI-driven A/B testing shines when you’re tackling large-scale, complex experiments involving multiple variables. These platforms excel at providing quick insights and enabling real-time optimization. They process data faster, adjust dynamically, and manage numerous variations simultaneously – perfect for scaling campaigns efficiently.

On the other hand, traditional A/B testing is your go-to for simpler experiments with fewer variables. It’s ideal when you prefer fixed durations and prioritize maintaining complete control and transparency throughout the process. Each method has its strengths, depending on the complexity and goals of your testing.

How much website traffic do I need for AI-driven testing to work well?

AI-driven testing tends to perform best on websites with roughly 10,000 to 100,000 or more monthly visitors. This level of traffic provides enough data to generate reliable and statistically meaningful results. Tests can still run on lower-traffic sites, but they take longer to collect enough data for actionable insights.

How do I ensure AI A/B test results are reliable and not just temporary wins?

To make sure your AI A/B test results are dependable, it’s crucial to establish statistical significance. This helps filter out random noise and ensures the results are meaningful. Use appropriate statistical methods and ensure your sample size is large enough to draw accurate conclusions. Always analyze the data within its context, considering factors like audience behavior or external influences.

Consistency matters, too. Regularly review your results and run follow-up tests to confirm their stability over time. Avoid making decisions based on short-term trends or data that doesn’t represent your target audience. This approach helps you make informed, data-backed choices.