Affinity propagation is a clustering algorithm that automatically determines the number of customer segments by identifying "exemplars" – actual data points that represent each group. Unlike traditional methods like K-means, which require you to guess the number of clusters, this approach uses a message-passing process to reveal natural groupings in the data.

Key Benefits:

- No Predefined Clusters Needed: The algorithm determines the number of clusters based on the data’s structure.

- Exemplars Instead of Averages: It selects real customers as representatives, offering clearer insights into each group.

- Handles Complex Data: Works well with clusters of varying shapes and densities, making it ideal for customer behavior analysis.

How It Works:

- Every customer is treated as a potential cluster center (exemplar).

- Data points exchange responsibility and availability messages to determine cluster assignments.

- Iterations continue until the clusters stabilize.

Applications:

- Customer Segmentation: Identifies distinct customer groups for targeted marketing.

- Personalized Recommendations: Improves product suggestions by leveraging real customer behaviors.

- Behavior Tracking: Monitors shifts in customer preferences over time.

Challenges:

- Computational Demand: High memory and processing requirements for large datasets.

- Parameter Tuning: Requires careful adjustment of preference and damping values for optimal results.

Affinity propagation is especially useful for businesses dealing with complex, unlabeled customer data. While it offers precision, its resource-intensive nature may require preprocessing or alternative methods for very large datasets.

Affinity Propagation | The Hitchhiker’s Guide to Machine Learning Algorithms

sbb-itb-2ec70df

How Affinity Propagation Works

Affinity propagation groups data points without requiring a predefined number of clusters. Instead, it treats each customer in a dataset as a potential "exemplar" – a representative data point that can define a cluster. Through an iterative process of message exchange between data points, the algorithm determines which exemplars best represent the data and assigns points to these clusters.

Two types of messages are exchanged during this process: responsibility and availability. Responsibility messages are sent from data points to potential exemplars, essentially asking, "How well can you represent me compared to others?" Availability messages flow in the opposite direction, indicating whether a data point is suitable to serve as an exemplar based on input from other data points. This back-and-forth continues until the cluster assignments stabilize.

One of the standout features of affinity propagation is that it selects actual data points as exemplars rather than calculating abstract averages. This makes the resulting clusters more grounded in real customer data.

What Sets Affinity Propagation Apart

A key feature of the algorithm is the preference parameter, which allows for fine-tuning the number of clusters. Typically, setting the preference to the median similarity among all customer pairs results in a moderate number of clusters. Adjusting the preference toward the minimum similarity reduces the number of clusters, while increasing it toward the maximum similarity produces more detailed segmentation.

Affinity propagation also excels in handling clusters with varying shapes and densities, unlike traditional methods that often assume clusters are spherical or uniform in size. This flexibility makes it particularly useful for analyzing customer behavior, which can vary significantly.

Core Algorithm Components

Affinity propagation relies on two key matrices that are updated iteratively: the responsibility matrix (R) and the availability matrix (A). The responsibility matrix measures how well-suited each potential exemplar is to represent a particular data point compared to other options. Meanwhile, the availability matrix evaluates how appropriate it is for a data point to choose a specific exemplar, considering feedback from other data points.

Pairwise similarities are calculated using negative squared Euclidean distance, with self-similarity scores influencing each customer’s likelihood of becoming an exemplar. To ensure stable updates, the algorithm uses a damping factor, ranging from 0.5 to 1.0. A higher damping factor (closer to 1.0) can help stabilize the process if convergence issues arise. These adjustments are crucial for achieving reliable cluster formation in marketing datasets.

However, affinity propagation has quadratic computational complexity, meaning it can become resource-intensive with very large datasets. To address this, preprocessing methods like dimensionality reduction or data sampling are often recommended.

Why Use Affinity Propagation for Customer Segmentation

Affinity propagation stands out as a powerful tool for marketing and business strategies. Its ability to process complex customer data without requiring manual input makes it a go-to choice for teams aiming to sharpen their targeting and boost campaign results. Here’s how it can elevate your approach to customer segmentation.

Automatic Cluster Detection

One of the standout features of affinity propagation is its ability to eliminate the guesswork in defining customer segments. Unlike traditional methods like K-means, which require you to decide the number of clusters beforehand (often based on intuition), affinity propagation allows the data itself to reveal the optimal number of clusters. This is achieved through its unique message-passing mechanism.

"AP algorithm discovers the optimal number of clusters based on the data’s inherent structure. This makes AP particularly useful when the number of clusters is unknown or when the data contains complex patterns." – Anshul Pal, Tech Blogger

This feature is particularly beneficial for exploratory market analysis, such as when launching a new product or stepping into a new market. By uncovering natural groupings within the data, the algorithm reveals subtle customer behaviors and preferences that might otherwise go unnoticed. For example, in a study focused on customer segmentation for business incubator tenants, affinity propagation outperformed other clustering methods, achieving a Silhouette Score of 0.699 and a Davies-Bouldin Index of 0.429.

Another key advantage is the use of real customer data as exemplars. These exemplars act as representative customer archetypes, offering a clear picture of behavioral patterns in your database. This makes it easier for marketing teams to create and communicate detailed customer personas to stakeholders. By understanding these archetypes, teams can lay the groundwork for campaigns that resonate more deeply with their audience.

Better Marketing Results

Effective customer segmentation translates directly into improved marketing outcomes. With more precise targeting, campaigns can achieve higher engagement rates and better ROI by aligning messages with the actual structure of your customer base.

Affinity propagation excels at identifying complex patterns, capturing subtle differences in customer behavior that simpler methods might overlook. This is especially useful for RFM (Recency, Frequency, Monetary) analysis, where the algorithm can automatically distinguish between loyal customers, at-risk buyers, and high-value spenders. Research by the algorithm’s creators has shown that affinity propagation can identify clusters with far lower error rates than other methods – and in less than 1/100th of the time for certain high-dimensional tasks.

Another strength of this algorithm is its adaptability. Since it determines clusters based on real-time data patterns rather than relying on static assumptions, it can quickly adjust to shifts in customer preferences and market dynamics. This flexibility ensures that your segmentation efforts remain relevant, even as customer behaviors evolve.

Marketing Use Cases for Affinity Propagation

Affinity propagation goes beyond identifying customer segments – it drives actionable results in marketing. Its ability to uncover natural groupings and adapt to shifting customer behaviors makes it a powerful tool for tackling real-world challenges. Here’s how businesses are leveraging it.

Building Targeted Campaigns

Traditional rule-based segmentation relies on assumptions, but affinity propagation uncovers patterns directly from the data. This allows marketers to design campaigns tailored to actual customer behaviors.

Take Optimove‘s Positionless Marketing Platform, for example. By using cluster analysis to identify unique customer personas, the platform achieved an 88% increase in campaign efficiency. Modern clustering techniques run daily on updated transactional data, enabling the discovery of hundreds of customer personas. This ensures campaigns reflect the current customer landscape rather than outdated snapshots.

"The goal of cluster analysis in marketing is to accurately segment customers in order to achieve more effective customer marketing via personalization." – Optimove

The strength of affinity propagation lies in its ability to handle complex data. It examines multiple dimensions – like purchase history, browsing habits, email interactions, and customer service data – simultaneously. This results in segments that mirror the way customers truly interact with your brand.

Beyond campaign optimization, affinity propagation also enhances product recommendations by identifying customer exemplars.

Creating Personalized Recommendations

Affinity propagation enables businesses to deliver highly tailored product recommendations by refining customer segments. Unlike K-Means, which relies on averages (centroids), this algorithm identifies actual customers as exemplars – real-life prototypes that guide recommendations based on genuine behaviors.

When paired with product affinity frameworks, the results are even more impactful. For example, bipartite graph algorithms can map relationships between customers and products, revealing clusters within customer–product networks. In one case, a graph-based system processed nearly 800,000 sales transactions in just 7.5 seconds on a standard PC. This kind of speed makes real-time recommendation engines accessible, even for mid-sized companies.

"Product affinity segmentation discovers the linking between customers and products for cross-selling and promotion opportunities to increase sales and profits." – Lili Zhang, Jennifer Priestley et al.

Affinity propagation also excels at uncovering niche customer groups that might otherwise be missed. These small, specialized segments often represent high-value opportunities, such as customers who frequently purchase complementary products or respond to specific messaging.

Tracking Customer Behavior Changes

Customer behaviors are constantly evolving, and effective segmentation must keep up. Affinity propagation’s dynamic nature ensures that segments are always up to date. Each time the algorithm runs, it recalculates clusters, reflecting the most current data. This is especially important in fast-moving markets where yesterday’s loyal customer might be at risk today.

The algorithm’s message-passing mechanism makes it highly responsive to new patterns. For instance, if customers begin purchasing different product combinations or engaging through new channels, affinity propagation adjusts automatically. This allows businesses to identify emerging trends and respond quickly, giving them an edge in competitive markets.

"If you can segment your audience into smaller, more homogenous clusters, you can craft messages, offers, and products that resonate more deeply with each segment." – Craig Does Data

To fully capitalize on this, treat segmentation as an ongoing process. Regularly update your feature set by incorporating new behavioral signals, such as "time since last login" or "churn risk score." This iterative approach ensures your marketing stays aligned with current customer behaviors, not outdated assumptions. By continuously adapting, affinity propagation strengthens both your campaign strategies and recommendation systems, forming a cornerstone of data-driven marketing.

Implementation Challenges and Limitations

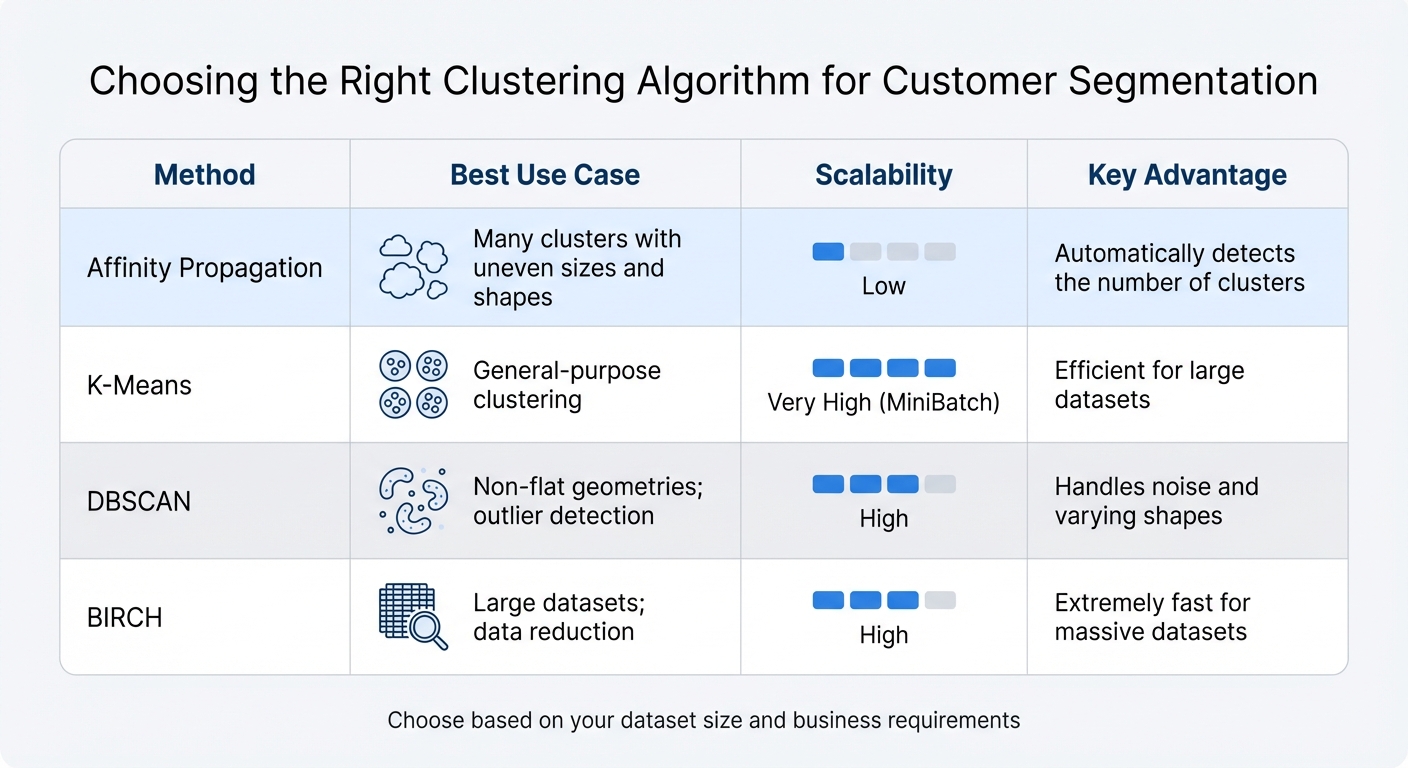

Affinity Propagation vs K-Means vs DBSCAN vs BIRCH Clustering Comparison

Affinity propagation can deliver effective segmentation results, but it comes with its own set of challenges, particularly around computational demands and parameter tuning. While it offers advantages for certain marketing applications, implementing it successfully requires careful preparation and resource management.

Computing Power Needs

One of the biggest hurdles with affinity propagation is its high computational demand, which scales quadratically with the size of your dataset. The algorithm’s time complexity is O(N² T), and its memory complexity is O(N²), where N is the number of data points. For example, doubling your customer base will quadruple both the memory and processing requirements. This is because the algorithm needs to store an N × N similarity matrix in memory.

To put this in perspective, a dataset with 10,000 customers requires storing 100 million similarity values. That’s a level of demand that can easily overwhelm the RAM of standard business computers. As Hiroaki Shiokawa from the University of Tsukuba explains:

"Although AP yields a higher clustering quality compared with other methods, it is computationally expensive. Hence, it has difficulty handling massive datasets that include numerous data objects".

For small businesses or teams lacking access to cloud-based high-memory systems, processing datasets larger than a few thousand customers may simply not be feasible. Optimized versions, like ScaleAP, can improve performance significantly, making the algorithm up to 100 times faster while maintaining accuracy. If resources are limited, consider preprocessing your data with dimensionality reduction techniques like PCA or sampling to reduce the dataset size before running the algorithm.

Setting Parameters and Preparing Data

The success of affinity propagation heavily depends on two key parameters: preference and damping.

- The preference parameter controls how likely a data point is to become a cluster center. Setting it to the median of input similarities is a common starting point, as it typically produces a moderate number of clusters. However, if your input data has very similar similarity values, setting the preference too low could result in a single cluster, while setting it too high could lead to each point forming its own cluster.

- The damping factor helps stabilize the algorithm. Insufficient damping can cause numerical oscillations, preventing the algorithm from converging. According to the scikit-learn documentation:

"When the algorithm does not converge, it will still return an array of cluster_center_indices and labels… however they may be degenerate and should be used with caution".

In such cases, results might label all data points as –1, indicating failure. To avoid this, start with a damping factor of 0.5 and adjust upward, even up to 0.9, if oscillations occur. Additionally, monitor the convergence process carefully. The algorithm typically stops after 200 iterations or when cluster boundaries remain stable for 15 consecutive iterations. Fine-tuning these parameters is essential for achieving meaningful segmentation results.

When to Choose Other Methods

Affinity propagation isn’t a one-size-fits-all solution. Its quadratic complexity makes it impractical for extremely large datasets, such as those with hundreds of thousands or millions of records. Additionally, if your marketing strategy requires a fixed number of segments – like predefined customer tiers such as bronze, silver, gold, and platinum – the automatic cluster detection feature of affinity propagation might work against you.

In these scenarios, simpler algorithms like K-Means may be a better fit. For instance, the MiniBatchKMeans variant is specifically designed to handle large datasets efficiently.

| Method | Best Use Case | Scalability | Key Advantage |

|---|---|---|---|

| Affinity Propagation | Many clusters with uneven sizes and shapes | Low | Automatically detects the number of clusters |

| K-Means | General-purpose clustering | Very High (MiniBatch) | Efficient for large datasets |

| DBSCAN | Non-flat geometries; outlier detection | High | Handles noise and varying shapes |

| BIRCH | Large datasets; data reduction | High | Extremely fast for massive datasets |

For smaller teams or businesses with limited resources, starting with simpler methods like K-Means and transitioning to advanced approaches as your technical capacity grows can be a more practical path. Additionally, for specific tasks like protein interaction graph partitioning, research has shown that other methods, such as Markov clustering, can outperform affinity propagation.

Ultimately, balancing computational requirements with segmentation goals is critical for building effective, data-driven strategies in resource-constrained environments.

Adding Affinity Propagation to Your Marketing Analytics

Affinity propagation truly shines when it’s part of a larger marketing analytics framework. Using it in isolation limits its potential; its real value emerges when combined with tools like RFM analysis and customer journey mapping. Together, they create a richer, more detailed picture of your customer base by automatically uncovering natural clusters within your data.

To get the most out of affinity propagation, integrate it early in your analytics process. This allows you to identify key features before applying more advanced models. Studies show that combining affinity propagation with other methods can significantly improve clustering accuracy.

This algorithm also plays a key role in Customer Relationship Management (CRM). By analyzing the average RFM (Recency, Frequency, and Monetary) values within each segment, affinity propagation helps define detailed customer personas and refine CRM strategies. For example, in one study, the algorithm identified four distinct customer segments based on RFM variables, achieving a Silhouette Score of 0.699 and a Davies-Bouldin Index of 0.429 – metrics that reflect strong clustering performance.

Improving Customer Journey Mapping

When it comes to customer journey mapping, affinity propagation offers a unique advantage: it identifies exemplars. Unlike K-means, which relies on calculated centroids, affinity propagation selects actual data points as representatives of each cluster. These exemplars represent real customers, providing clear insights into typical behaviors across touchpoints.

For instance, you might discover that your "at-risk" segment includes customers with high monetary value but low recency scores. This insight could guide targeted re-engagement campaigns at critical touchpoints, helping you address their needs before they churn. By integrating these findings, your team can respond more quickly to changing customer behaviors.

Responding to Market Shifts

Affinity propagation is particularly useful during times of market volatility. Its ability to automatically determine the optimal number of clusters means you’re not limited to pre-defined categories. This flexibility allows the algorithm to uncover new customer behaviors without manual adjustments.

When entering a new market or navigating uncertain conditions, affinity propagation can help you explore emerging customer segments. Adjusting the preference parameter can fine-tune the level of detail in your clusters, revealing niche behaviors during market shifts. For example, during a period of economic change, you might identify a new segment of price-sensitive customers. This insight can immediately influence your pricing and promotional strategies. Research has shown that affinity propagation is effective in tracking temporal patterns and structural shifts. A study covering 1997–2017 demonstrated how the algorithm identified changes in market behaviors and multipliers over time.

At Growth-onomics, we incorporate techniques like affinity propagation into our marketing analytics framework to create actionable strategies that stay aligned with shifting market dynamics.

Conclusion

Affinity propagation offers a powerful way to enhance your marketing strategy by simplifying and improving customer segmentation. Unlike traditional methods like K-means, this algorithm automatically determines the optimal number of clusters, eliminating the need for trial-and-error. By identifying exemplars, it creates clear, real-world customer profiles, making persona development more straightforward.

Research highlights its effectiveness, achieving a Silhouette Score of 0.699 and a Davies-Bouldin Index of 0.429, which underscores its strong clustering performance. When applied to RFM analysis, it efficiently groups customers based on their purchasing behavior, helping businesses design targeted CRM strategies and allocate marketing budgets more effectively.

This approach is scalable, capable of handling millions of customers, and adaptable to changing market trends. By tweaking the preference parameter, marketers can adjust the level of detail in clustering to uncover niche customer behaviors as conditions shift.

For marketers dealing with unlabeled customer data, affinity propagation provides an unbiased, data-driven way to identify natural groupings. Whether you’re crafting targeted campaigns, offering personalized recommendations, or monitoring behavioral shifts, this algorithm equips you with the insights needed to make informed decisions. Integrating affinity propagation with tools like RFM analysis or customer journey mapping can further enhance your ability to deliver precise and responsive marketing strategies.

FAQs

What data should I use for affinity propagation segmentation?

Affinity propagation segmentation relies on pairwise similarity data between data points. This typically involves similarity matrices derived from feature comparisons or distance metrics. By using these inputs, the algorithm can pinpoint clusters and group individuals or items with shared characteristics efficiently.

How do I choose good preference and damping values?

To choose preference values, keep in mind that higher values lead to more clusters, while lower values result in fewer clusters. The default is usually set to the median of the input similarities. For damping, it’s recommended to start with the default value of 0.5, which helps prevent oscillations. If you notice instability or the algorithm fails to converge, try increasing the damping value (for instance, to 0.9) and/or raising the maximum number of iterations. Fine-tune these settings based on your data and watch for any warning messages.

How can I run affinity propagation on large customer datasets?

Affinity propagation can demand significant computational resources, especially with large datasets, because it involves quadratic message updates. To tackle this, you can turn to methods like ScaleAP, which trims down redundant computations, or use sampling strategies to cut back on the number of pairwise similarity calculations. Pair these techniques with optimized libraries like scikit-learn, and you’ll find affinity propagation becomes much more efficient and practical for handling big data.